AI Agent Frameworks in 2026: 8 SDKs, ACP, and the Trade-offs Nobody Talks About

A comparison of 8 agent frameworks in 2026: provider-native SDKs vs independent frameworks, MCP/A2A protocol adoption, and the trade-offs that matter in production.

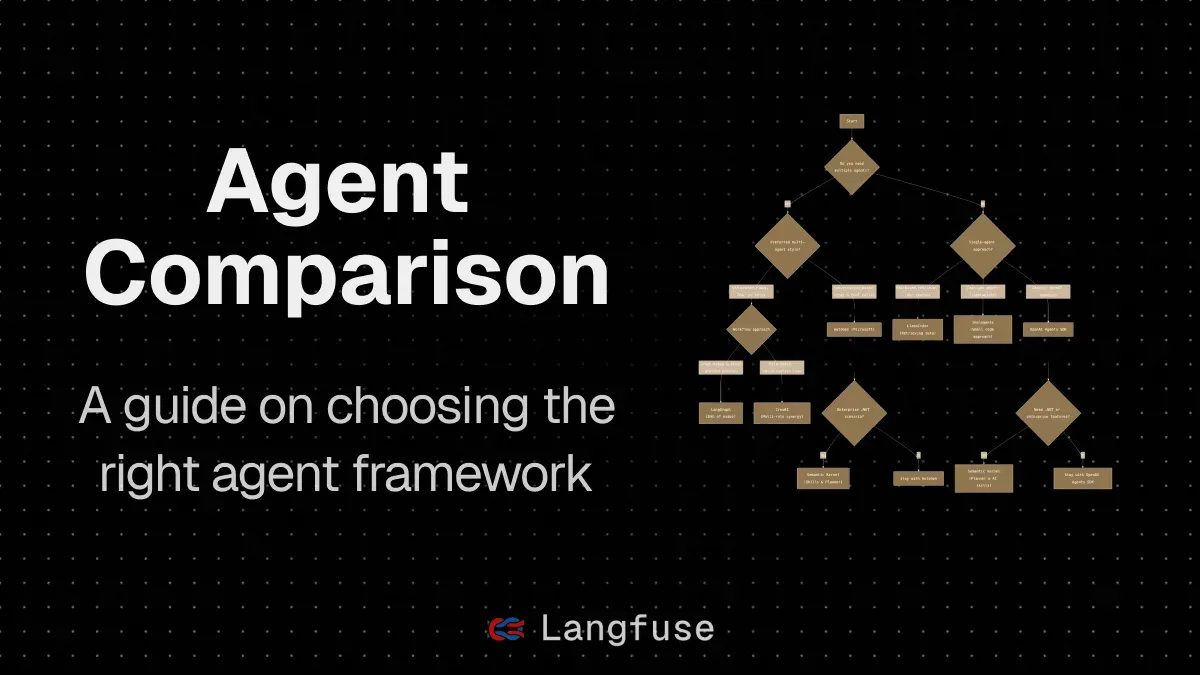

The agent framework landscape in 2026 is starting to look less like a frontier and more like an industry. A new comparison from MorphLLM maps eight major SDKs — Claude Agent SDK, OpenAI Agents SDK, Google ADK, LangGraph, CrewAI, Smolagents, Pydantic AI, and AutoGen — across the dimensions that actually matter for production systems: multi-agent support, protocol compatibility with MCP and A2A, and language coverage beyond Python. The takeaway isn't a clean winner. It's a useful split between provider-native SDKs, which offer depth of integration at the cost of lock-in, and independent frameworks, which keep you flexible at the cost of integration work you have to do yourself.

One signal worth paying attention to: ACP, the protocol that tried to solve agent-to-agent communication before A2A existed, has been merged into A2A under Linux Foundation governance. That matters because it suggests the industry is actually standardizing rather than fragmenting — the same Foundation that steward Linux is now stewarding the protocol that agents will use to talk to each other. MCP crossed 200 server implementations, which is a meaningful threshold for a protocol that didn't exist two years ago. If you're building agent systems today and not thinking about which protocols to adopt, you're accumulating technical debt faster than you think.

The comparison gets into the architectural trade-offs that hello-world tutorials never show. Provider-native SDKs like Claude's and OpenAI's are optimized for their own models first, which means you get tighter tool integration and lower latency for common patterns — but you're building on a foundation that might not transfer if your model strategy changes. Independent frameworks like LangGraph and CrewAI are model-agnostic by design, which matters if you're running a mix of providers or expect to switch as the model market evolves. Neither approach is wrong. The mistake is not knowing which tradeoff you're making when you pick one.

What the piece doesn't answer — and nobody really has yet — is how to evaluate these frameworks on operational characteristics that matter at scale: cold start latency under concurrent load, memory behavior with long-running agent state, debugging and observability tooling maturity. Those are the questions that hit you around the third month of a production deployment, not the first week. The framework that wins the benchmark comparisons today might not be the one that's easiest to debug at 2am when your agent stack is behaving unexpectedly. That's not a criticism of this comparison — it's an honest acknowledgment of where the industry still has work to do.