Codex CLI 0.120.0 Turns Background Agents Into a First-Class Terminal Workflow

OpenAI’s latest Codex CLI release matters for a boring reason, which is exactly why it matters. Terminal agents stop feeling clever the moment they start stepping on their own state: one task is running, a second prompt gets lost, hook output becomes a wall of noise, and you are left wondering whether the tool is actually working or just thinking theatrically. Codex CLI 0.120.0 is a real product update because it tackles that layer instead of hiding behind another model-version headline.

The headline changes in the April 11 release are that Realtime V2 now streams background-agent progress while work is still running and queues follow-up responses until the active response completes. Those sound like implementation details. They are not. They are the minimum viable ergonomics for anyone trying to use a coding agent as a background worker instead of a one-shot chatbot.
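To make the queueing behavior concrete, here is a toy model in Python. It is a sketch of the general pattern (one active response, follow-ups held in FIFO order, progress notes emitted while work runs), not Codex's actual implementation. The `Session` class and every method name in it are hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Session:
    """Hypothetical session: one active response at a time, follow-ups wait in order."""

    active: Optional[str] = None
    pending: deque = field(default_factory=deque)
    log: list = field(default_factory=list)

    def submit(self, prompt: str) -> None:
        # Start immediately if idle; otherwise hold the follow-up until the
        # active response completes instead of dropping or interleaving it.
        if self.active is None:
            self._start(prompt)
        else:
            self.pending.append(prompt)
            self.log.append(f"queued: {prompt}")

    def progress(self, note: str) -> None:
        # Progress is reported while the task is still running.
        if self.active is not None:
            self.log.append(f"progress[{self.active}]: {note}")

    def complete(self) -> None:
        # Finish the active response, then promote the oldest queued follow-up.
        self.log.append(f"done: {self.active}")
        self.active = None
        if self.pending:
            self._start(self.pending.popleft())

    def _start(self, prompt: str) -> None:
        self.active = prompt
        self.log.append(f"started: {prompt}")


session = Session()
session.submit("refactor parser")
session.progress("reading files")
session.submit("also add tests")  # arrives mid-task, so it is queued
session.complete()                # the queued follow-up starts only now
```

The point of the sketch is the ordering guarantee: the second prompt is neither lost nor run concurrently with the first, which is the failure mode the release is addressing.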

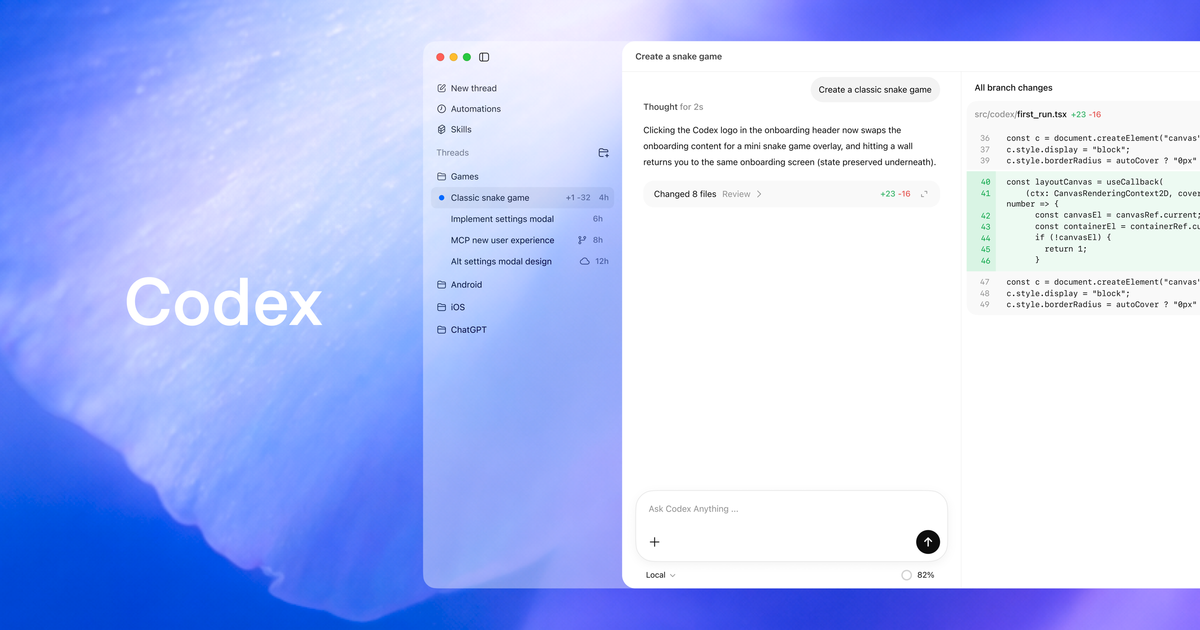

OpenAI tied those changes to PRs #17264 and #17306, which is useful because it shows this is not marketing fog. There is also a revealing rename in the release notes: Realtime V2’s tool is now called background_agent. That naming shift says a lot. OpenAI is not merely polishing a terminal assistant. It is making a claim about the core unit of work in Codex: an agent that continues operating while you supervise, interrupt, or stack additional instructions around it.

The rest of the release supports the same interpretation. Hook activity in the TUI is easier to scan, with live hooks shown separately and completed hook output suppressed unless it is useful. Code-mode tool declarations now include MCP outputSchema details, which means structured tool output has a better chance of being treated like typed data instead of glorified prose. SessionStart hooks can distinguish between a fresh startup, a resumed session, and a session created by /clear. None of this is flashy, but all of it is what happens when a team is trying to make a long-running agent feel inspectable rather than mystical.

If you compare this to the April 10 0.119.0 release, the pattern gets clearer. That earlier drop pushed voice defaults onto the newer WebRTC path, expanded MCP support, improved remote and app-server workflows, added better TUI notifications, and sped up stale rate-limit refreshes. One day later, 0.120.0 followed with background-progress streaming, response queueing, Guardian review plumbing, and more Windows sandbox fixes. That is not random patch churn. It looks like OpenAI is tightening the loop around orchestration, safety, and multi-step sessions.

The terminal-agent market is finally being forced to care about state management

This is the part too many AI coding demos skip. Generating code is table stakes now. The harder problem is session control. If an agent cannot tell you what it is doing while it runs, you will interrupt it too early. If it cannot accept a follow-up cleanly, you will either wait around or spawn duplicate work. If hooks and tool outputs are unreadable, you stop trusting the automation layer and fall back to copy-paste supervision.

Codex 0.120.0 is OpenAI admitting, in code, that agent UX is becoming a scheduling problem. That puts it in the same lane as GitHub’s recent Copilot cloud-agent work and the broader shift toward background tasks, branch-based delegation, and implementation planning. The difference is that OpenAI is trying to make the background model native inside the terminal itself, not just a handoff to a separate cloud workflow.

There is also a security story buried here. The release includes fixes for Windows elevated sandbox handling with split filesystem policies, symlinked writable-root permission handling, and a TLS panic in remote websocket sessions. Combined with the current Codex approvals and security docs, which stress no-network defaults, OS-enforced sandboxing, protected paths like /.git and /.codex, and approval gates for network or out-of-workspace actions, the product direction looks pretty clear: more autonomy, but with increasingly explicit containment.
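A rough way to picture "explicit containment" is as a small decision function that runs before an action does. The Python sketch below is an illustration of that idea only. It is not Codex's sandbox logic, the function name and return values are hypothetical, and the protected directory names are simply the ones mentioned above.

```python
from pathlib import PurePosixPath

# Directory names taken from the protected paths named in the text.
PROTECTED_DIRS = {".git", ".codex"}


def review_action(workspace: str, kind: str, target: str = "") -> str:
    """Return 'allow' or 'ask' for a proposed action (hypothetical policy)."""
    if kind == "network":
        # No-network default: any network use goes to the user for approval.
        return "ask"
    if kind == "write":
        root = PurePosixPath(workspace)
        path = PurePosixPath(target)
        if not path.is_absolute():
            path = root / path
        try:
            rel = path.relative_to(root)
        except ValueError:
            return "ask"  # target is outside the workspace
        if ".." in rel.parts:
            return "ask"  # path tries to climb out of the workspace
        if any(part in PROTECTED_DIRS for part in rel.parts):
            return "ask"  # protected directory somewhere on the path
        return "allow"
    # Unknown action kinds are gated rather than silently allowed.
    return "ask"
```

A real sandbox enforces this at the OS level rather than by string inspection, and a lexical check like this one does not resolve symlinks, which is exactly the class of problem the symlinked writable-root fix points at.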

That matters because the agent market is running into the same wall everywhere. Builders want longer-running automation. Security teams want narrower blast radii. The product that wins is not the one that says “trust me”; it is the one that makes trust reviewable. Progress streaming, transcript deltas for Guardian follow-ups, stable Guardian review IDs, and typed tool outputs all push in that direction.

Why developers should care before they care about the version number

If you use Codex casually, this release will feel incremental. If you use it as a real tool, it changes daily friction in ways that compound. Being able to see progress from a background task means you spend less time context-switching into blind faith. Queued follow-ups mean you can keep driving the session instead of waiting for a clean prompt boundary. Better hook rendering means you can tell whether local automation is doing useful work or just generating visual entropy.

For teams experimenting with MCP-backed workflows, the outputSchema improvement is especially worth watching. Typed tool results are how agent systems stop hallucinating glue code between integrations. If you want reliable structured outputs from tools, schema visibility is not optional. It is the difference between “the model probably understood this blob” and “the agent has a declared contract for what the tool returns.”
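Here is what a "declared contract" can look like in practice, sketched in Python. The `inputSchema` and `outputSchema` field names come from the MCP tool definition format. Everything else is an assumption for illustration: the `get_build_status` tool, its fields, and the `schema_errors` helper, which checks only a tiny subset of JSON Schema (required keys and primitive types on a flat object).

```python
# Hypothetical MCP tool declaration with a typed output contract.
TOOL = {
    "name": "get_build_status",
    "inputSchema": {
        "type": "object",
        "properties": {"branch": {"type": "string"}},
        "required": ["branch"],
    },
    "outputSchema": {
        "type": "object",
        "properties": {
            "branch": {"type": "string"},
            "passed": {"type": "boolean"},
            "failed_tests": {"type": "integer"},
        },
        "required": ["branch", "passed"],
    },
}

_PY_TYPES = {
    "string": str,
    "boolean": bool,
    "integer": int,
    "number": (int, float),
    "object": dict,
    "array": list,
}


def schema_errors(value, schema):
    """Check a flat result object against a schema; return a list of problems."""
    if not isinstance(value, dict):
        return ["result is not an object"]
    errors = []
    for key in schema.get("required", []):
        if key not in value:
            errors.append(f"missing required field: {key}")
    for key, sub in schema.get("properties", {}).items():
        if key not in value:
            continue
        item = value[key]
        # bool is a subclass of int in Python, so reject it for numeric fields.
        if isinstance(item, bool) and sub["type"] in ("integer", "number"):
            ok = False
        else:
            ok = isinstance(item, _PY_TYPES[sub["type"]])
        if not ok:
            errors.append(f"{key}: expected {sub['type']}")
    return errors


good = {"branch": "main", "passed": True, "failed_tests": 0}
bad = {"branch": "main", "passed": "yes"}
print(schema_errors(good, TOOL["outputSchema"]))  # []
print(schema_errors(bad, TOOL["outputSchema"]))   # ['passed: expected boolean']
```

With the schema visible to the agent, a malformed result becomes a detectable error rather than a blob the model has to interpret. A production setup would use a full JSON Schema validator instead of this hand-rolled check.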

The Windows fixes also deserve more attention than they will get. Too much coding-agent software still behaves as if Windows users should either tolerate second-class support or quietly move into WSL and pretend the host OS is irrelevant. OpenAI keeps spending release budget on Windows sandboxing and permissions, which suggests it understands the enterprise truth most AI-native startups postpone learning: the platform story is not complete until the awkward machines work too.

The practical move for developers is simple. If Codex is part of your daily terminal workflow, upgrade and test three things on purpose: background progress visibility during longer tasks, follow-up message behavior while a task is active, and any MCP tools that return structured data. If you are on Windows, add a permissions sanity check to that list, especially for symlink-heavy repos or workflows that rely on elevated sandbox paths. And if you lead a team evaluating terminal agents, stop comparing only raw coding quality. Start comparing how each tool handles background work, approvals, state collisions, and reviewability. That is where the product category is actually being decided.

OpenAI did not ship a glamorous release here. It shipped something better: evidence that Codex is being rebuilt around background work as a first-class idea. The terminal-agent market has been promising “delegate and supervise” for months. This is what it looks like when one of the major vendors starts doing the plumbing required to make that promise less fake.

Sources: OpenAI Codex changelog, openai/codex release 0.120.0, Codex agent approvals and security docs