Gemini 3.1 Flash Live Launches on AI Studio for Real-Time Voice & Vision Agents

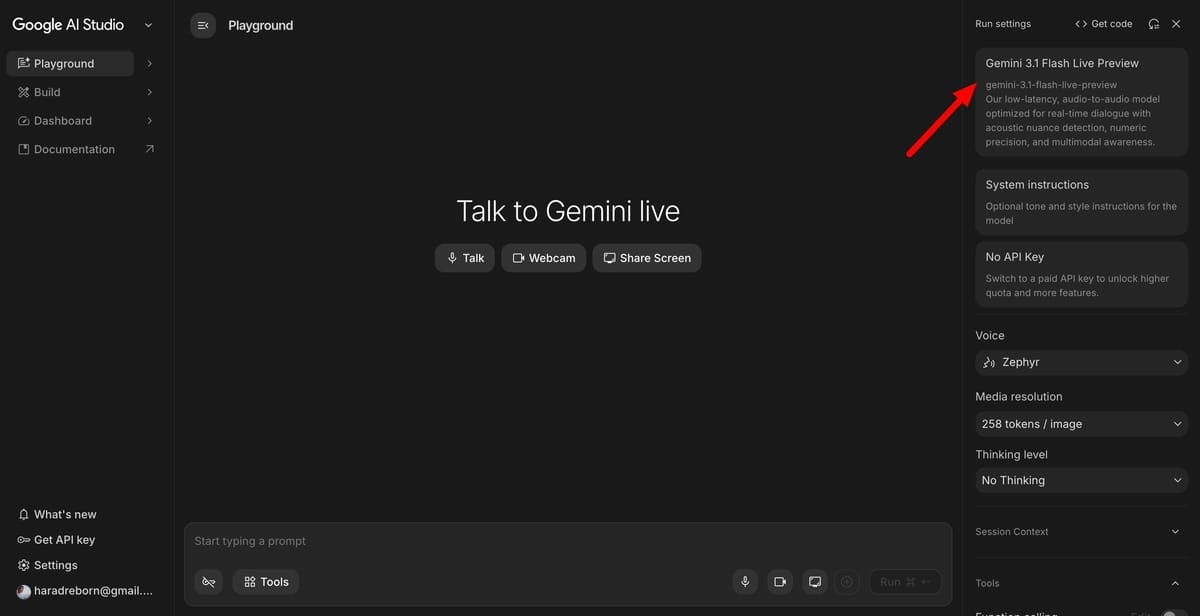

Google launched Gemini 3.1 Flash Live on March 26, 2026, and it's a meaningful step forward for developers building real-time conversational and multimodal applications. Available now in AI Studio and the Gemini app, the model is purpose-built for low-latency audio and voice interactions, the kind where a half-second delay breaks the illusion of natural dialogue. Google says it handles noisy environments better than previous versions and completes tasks more reliably when audio quality isn't perfect, which matters for real-world deployments.

The model is accessible via the Gemini API, making it relatively straightforward to wire into voice agents, live customer support tools, or anything that needs to see and hear simultaneously. Live video analysis is explicitly in scope, which opens up use cases like real-time coaching, accessibility tools, and interactive video experiences that feel genuinely responsive rather than laggy.
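To give a feel for the client-side plumbing a voice agent needs, here is a minimal sketch of one piece: cutting captured microphone audio into small fixed-duration chunks before streaming them to a live session. It deliberately makes no API calls. The audio format (16 kHz, 16-bit mono PCM) and the 100 ms chunk length are assumptions for illustration, not confirmed requirements of Gemini 3.1 Flash Live, and the helper names `chunk_size_bytes` and `iter_pcm_chunks` are hypothetical. Check the Gemini API documentation for the formats the model actually accepts.

```python
from typing import Iterator

# Assumed input format, for illustration only: 16 kHz, 16-bit mono PCM.
SAMPLE_RATE_HZ = 16_000
BYTES_PER_SAMPLE = 2
CHUNK_MS = 100  # illustrative chunk duration; smaller chunks mean less buffering delay


def chunk_size_bytes(sample_rate_hz: int = SAMPLE_RATE_HZ,
                     bytes_per_sample: int = BYTES_PER_SAMPLE,
                     chunk_ms: int = CHUNK_MS) -> int:
    """Return the number of bytes of mono PCM audio that cover chunk_ms milliseconds."""
    return sample_rate_hz * bytes_per_sample * chunk_ms // 1000


def iter_pcm_chunks(pcm: bytes, size: int) -> Iterator[bytes]:
    """Yield consecutive slices of at most `size` bytes; the final slice may be shorter."""
    if size <= 0:
        raise ValueError("size must be positive")
    for start in range(0, len(pcm), size):
        yield pcm[start:start + size]


if __name__ == "__main__":
    # One second of silence stands in for captured microphone audio.
    one_second = bytes(SAMPLE_RATE_HZ * BYTES_PER_SAMPLE)
    size = chunk_size_bytes()
    chunks = list(iter_pcm_chunks(one_second, size))
    print(size, len(chunks))  # prints "3200 10"
```

In a real agent, each chunk would be handed to whatever streaming call the Gemini API client exposes as soon as it is captured, so that audio is not buffered on the client for longer than one chunk.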

For developers who've been waiting for a production-ready, fast multimodal model they can actually ship to users, Gemini 3.1 Flash Live looks like the most capable option Google has offered for this category. The competition in real-time voice AI is intensifying quickly, and Google's move to make this available broadly through AI Studio is a clear signal it wants Gemini to be the default infrastructure for the next wave of live AI applications.