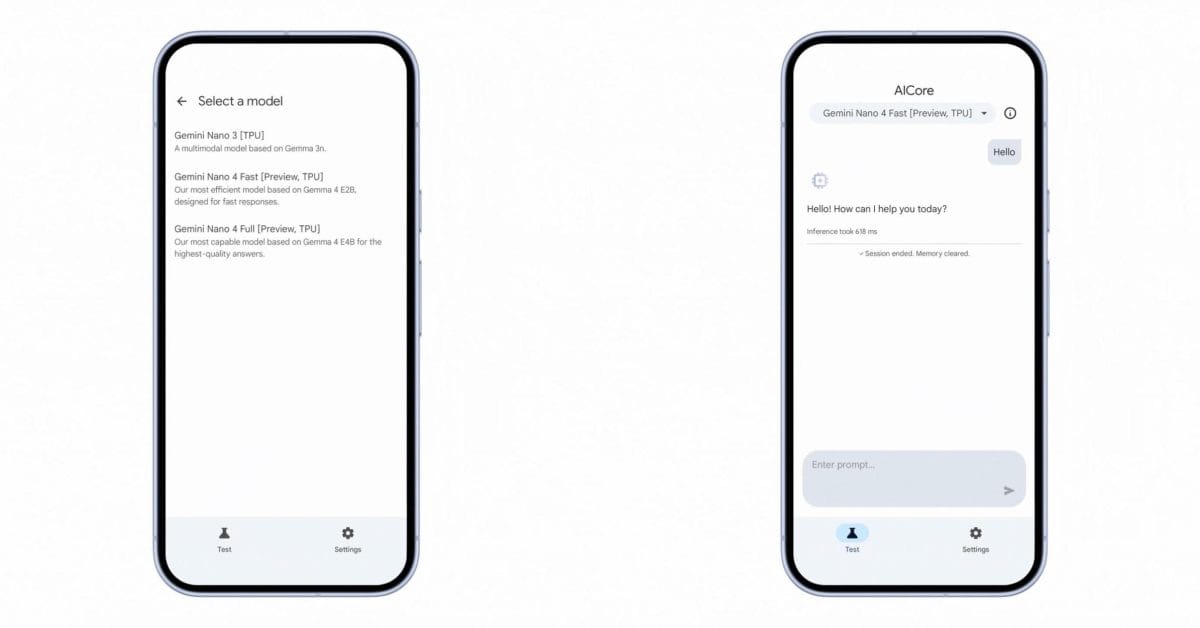

Gemini Nano 4 Arrives for Android AICore: 4x Faster, 60% Less Battery, Based on Gemma 4

Google's on-device AI strategy reaches a meaningful inflection point with the preview of Gemini Nano 4 for Android AICore. Built on the new Gemma 4 architecture, Nano 4 ships in two variants. Nano 4 Fast uses a 2-billion-parameter distilled version of Gemma 4 and delivers roughly three times the speed of its predecessor; Nano 4 Full uses the full 4-billion-parameter model for the highest reasoning quality. Both variants are designed to run locally on Android hardware without cloud round-trips, and both are billed as substantially more efficient than Nano 3: up to four times faster execution and sixty percent less battery drain.

The practical consequences of that efficiency jump are significant. On-device AI at this performance level opens the door to sophisticated features, including chain-of-thought reasoning for content moderation, time-aware reasoning tied to calendar and reminder contexts, improved math accuracy, and native multimodal understanding across text, image, and audio, all without draining a phone's battery or requiring a network connection. Gemini Nano 3 was capable, but Nano 4's efficiency-to-performance ratio is in a different class entirely: mid-range Android hardware can now run AI features that previously required flagship specs or cloud processing.

Native support for more than 140 languages strengthens Google's global positioning, particularly in markets where persistent connectivity isn't a given. Developers can sign up now for the AICore Developer Preview, which will be the path for bringing these capabilities into production applications. With competition in the AI assistant market tightening across both mobile and desktop, Google's bet on increasingly capable on-device models is a direct response to the limits of cloud dependency: latency, privacy, and connectivity all create friction that purely local inference avoids.