Grok Triggered Alarming CSAM Warning for Innocent Family Photos — xAI Quietly Revised It

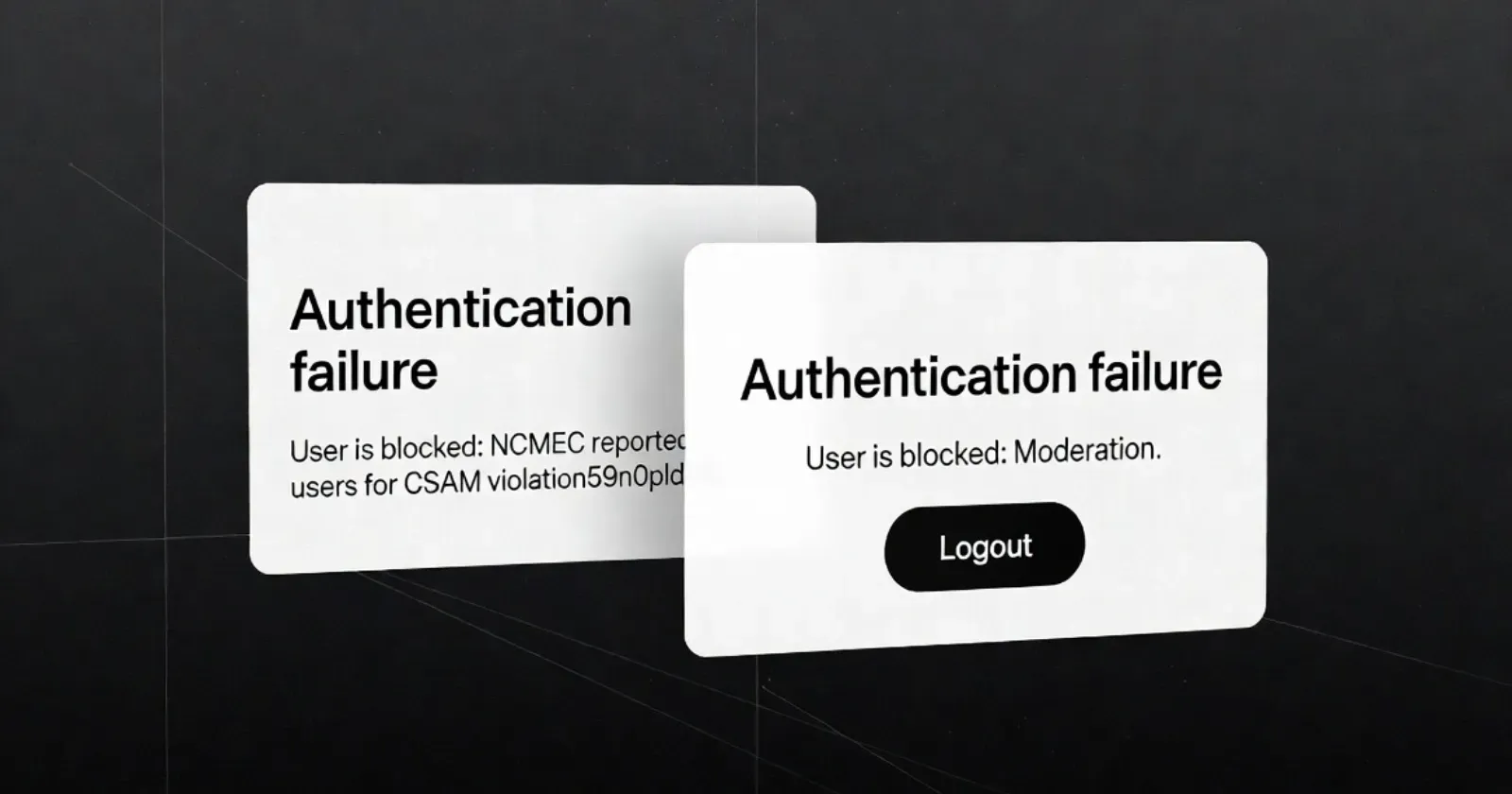

A content moderation mishap put xAI in an uncomfortable spotlight this week after Grok users reported receiving alarming safety warnings in response to entirely innocent requests. The warnings referenced CSAM (child sexual abuse material) and the National Center for Missing and Exploited Children (NCMEC). The false positives most commonly appeared when users asked Grok to edit family photos, such as removing children from the background of an image. Instead of a proportionate response, the system surfaced language typically associated with serious criminal content violations, leaving users shocked and confused.

The incident highlights the real-world cost of blunt-instrument safety filters. Overly aggressive moderation that conflates benign requests with harmful content does more than inconvenience users: it creates reputationally damaging false associations for a platform actively courting enterprise customers and regulatory goodwill. By April 3rd, xAI had quietly revised the warning language to remove the NCMEC and CSAM references, but made no public announcement about the change.

The silent revision is itself worth scrutiny. At a moment when xAI is seeking enterprise credibility and building toward a high-profile public offering, the combination of a harmful false-positive incident and a low-transparency fix raises legitimate questions about the company's approach to safety communication. Users and advocates increasingly expect not just fixes but accountability, and a quiet edit to a warning message is unlikely to satisfy either group.