MiniMax M2.7 — The $0.30 Model That Matched Claude Opus 4.6

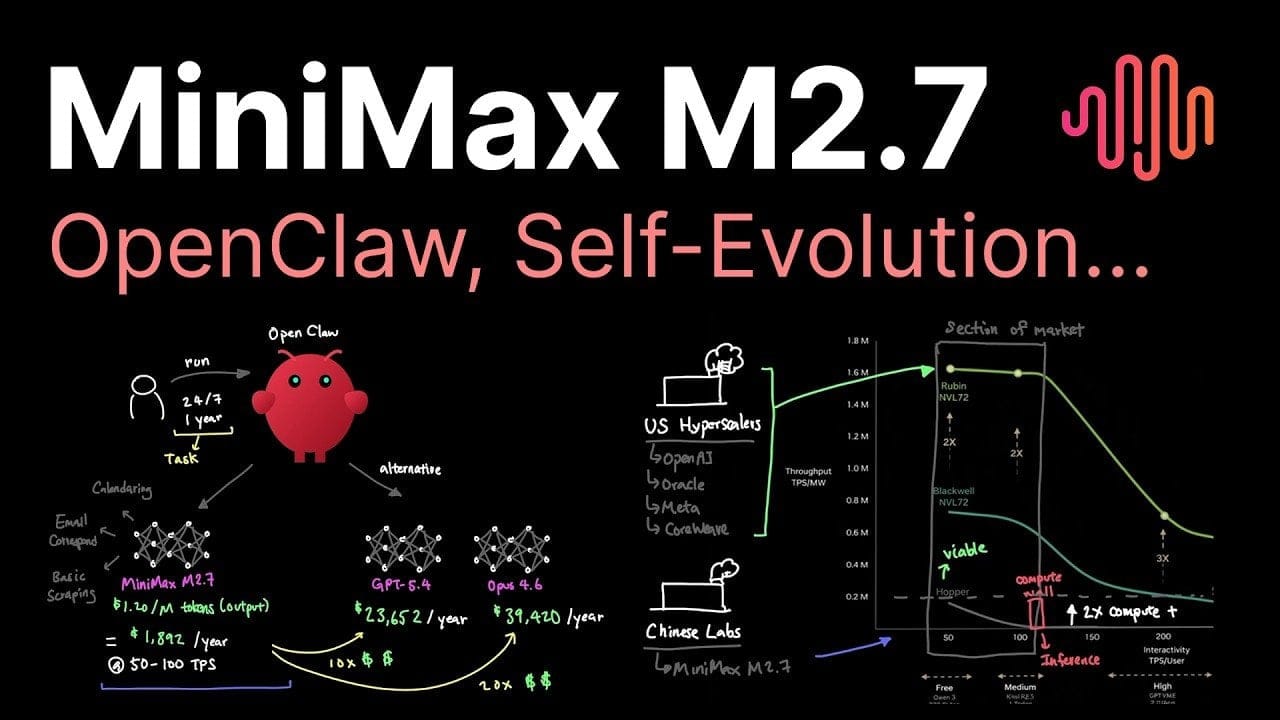

The AI model landscape just got a serious shakeup. MiniMax has released M2.7, a 230-billion-parameter model that goes toe-to-toe with Claude Opus 4.6 on leading benchmarks — and does it for roughly $0.30 per million tokens. That's not a typo. For teams watching their inference bills, this is the kind of number that rewrites project plans.

Beyond the price tag, M2.7 offers practical specs: a 200,000-token context window, processing speeds of 50–100 tokens per second, and a design tuned to run on older GPU architectures. Annual operating cost is put at around $2,000, and early adopters report 30–50% reductions in manual ML workflow effort. It's built for agentic pipelines and privacy-sensitive local deployments, the workloads where sending data to a cloud API isn't always an option.
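To put those numbers in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes a flat $0.30 per million tokens for every token, which is a simplification: the figures above don't say whether the price covers input, output, or both, or how the $2,000 annual estimate was derived. The function names are made up for illustration.

```python
# Back-of-the-envelope arithmetic using the figures quoted in the article.
# Assumption: one flat price applies to every token (input and output alike).
PRICE_PER_MILLION_USD = 0.30
CONTEXT_WINDOW_TOKENS = 200_000


def cost_usd(tokens: float, price_per_million: float = PRICE_PER_MILLION_USD) -> float:
    """Cost in USD of processing `tokens` tokens at a flat per-million rate."""
    return tokens / 1_000_000 * price_per_million


def tokens_for_budget(budget_usd: float, price_per_million: float = PRICE_PER_MILLION_USD) -> float:
    """How many tokens a given budget buys at a flat per-million rate."""
    return budget_usd / price_per_million * 1_000_000


def generation_seconds(tokens: float, tokens_per_second: float) -> float:
    """Time to produce `tokens` tokens at a steady throughput."""
    return tokens / tokens_per_second


if __name__ == "__main__":
    # One request that fills the whole 200,000-token context window.
    print(f"Full context: ${cost_usd(CONTEXT_WINDOW_TOKENS):.2f}")  # $0.06

    # What a $2,000 yearly budget would buy at the flat rate.
    print(f"$2,000 buys about {tokens_for_budget(2_000) / 1e9:.2f}B tokens")  # 6.67B

    # A 1,000-token reply at the slow and fast ends of the quoted range.
    print(generation_seconds(1_000, 50), generation_seconds(1_000, 100))  # 20.0 10.0
```

Under that flat-rate assumption, even a request that fills the full context window costs a few cents, which is why per-token pricing at this level changes the math for long-context and agentic workloads.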

The bigger story here is structural. The AI market is splitting in two: premium frontier models from the usual suspects, and a growing tier of cost-efficient challengers — many of them from Chinese labs — that are increasingly hard to dismiss on performance grounds alone. M2.7 is a clean example of that second tier growing up fast.