Stop Using AGENTS.md and CLAUDE.md — Research Says You're Probably Doing It Wrong

AGENTS.md and CLAUDE.md have become standard practice in AI-assisted development: the go-to mechanism for telling a coding agent what it needs to know about your project. A controlled study across real repository benchmarks now complicates the assumption that these files reliably help. Developer-written context files produced a modest performance improvement. LLM-generated context files produced a modest performance degradation. Both configurations increased token usage and inference cost relative to no context file at all. The mechanism for the degradation was consistent: auto-generated files grow long and repetitive, duplicating information already available in the README and diluting the agent's attention rather than adding useful signal. The "let your agent write its own CLAUDE.md" pattern, popular precisely because it requires no effort, is the pattern most likely to hurt quality.

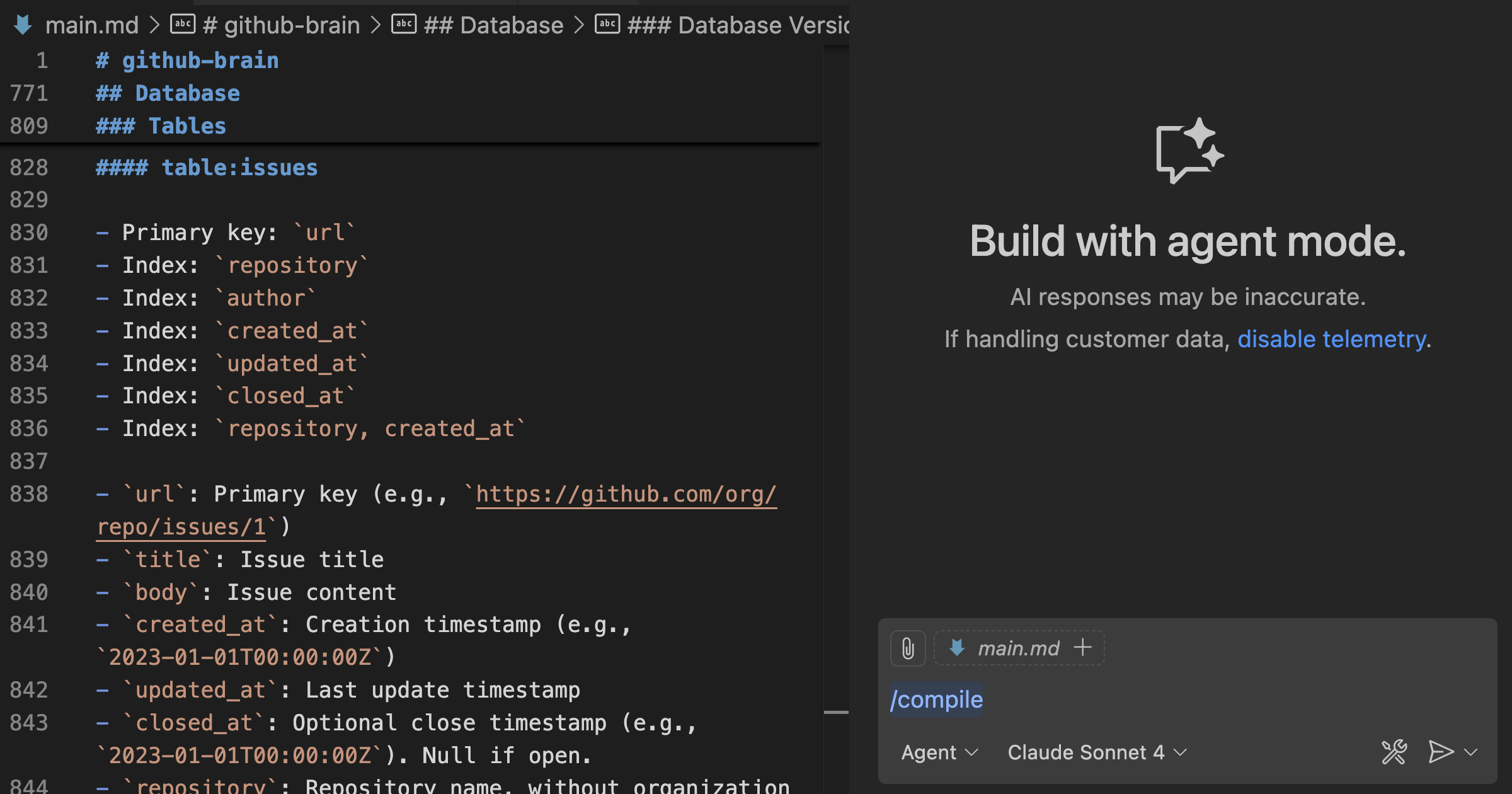

The practical implications are immediate for anyone running Claude Code, Codex, or Cursor on a project with context files. If your CLAUDE.md or AGENTS.md was generated automatically, or has grown through accumulation rather than deliberate authorship, you are probably adding cost while subtly degrading output. The quality bar the research implies is precise: short, focused, limited to instructions a developer would intentionally write, and excluding anything already documented in the README. The file should contain agent-specific behavioral constraints — not project documentation that the agent could discover on its own.
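As a rough way to apply that quality bar, the sketch below checks a context file for two of the problems described above: excessive length and lines copied from the README. It is an illustration, not tooling from the study. The function name `review_context_file`, the `MAX_WORDS` threshold of 300, and the exact-line matching rule are all assumptions chosen for the example.

```python
from pathlib import Path

# Hypothetical threshold, not a figure from the research; tune it per project.
MAX_WORDS = 300


def normalize(line: str) -> str:
    """Lowercase and collapse whitespace so near-identical lines compare equal."""
    return " ".join(line.lower().split())


def review_context_file(context_text: str, readme_text: str,
                        max_words: int = MAX_WORDS) -> dict:
    """Return simple length and README-overlap metrics for a context file."""
    # Non-empty README lines, normalized, for exact-match lookup.
    readme_lines = {normalize(l) for l in readme_text.splitlines() if normalize(l)}
    context_lines = [normalize(l) for l in context_text.splitlines() if normalize(l)]

    # Lines in the context file that also appear in the README.
    duplicated = [l for l in context_lines if l in readme_lines]
    word_count = len(context_text.split())

    return {
        "word_count": word_count,
        "too_long": word_count > max_words,
        "duplicated_lines": duplicated,
    }


if __name__ == "__main__":
    # Assumed file locations; adjust to AGENTS.md or another path as needed.
    context_path, readme_path = Path("CLAUDE.md"), Path("README.md")
    if context_path.exists() and readme_path.exists():
        report = review_context_file(
            context_path.read_text(encoding="utf-8"),
            readme_path.read_text(encoding="utf-8"),
        )
        print(report)
```

Exact-line matching only catches verbatim duplication. Paraphrased README content, which auto-generated files often contain, still needs a human read.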

Alex's analysis at ZazenCodes walks through a live comparison on a newsletter feature implementation to make the performance difference concrete, and synthesizes the research findings into an actionable review checklist. For teams that have invested in context engineering, this is a useful calibration: the investment pays off when the files are written with discipline, and quietly erodes quality when they are not.