Your Cloud Architecture Is Fighting Your Coding Agent — Here's How to Fix It

Most engineers troubleshooting friction with AI coding agents have been looking in the wrong place. The instinct is to sharpen prompts, tweak system instructions, or switch models. But AWS Solutions Architects are making a different argument: the friction is architectural. Traditional cloud infrastructure was designed for human development cadences — slow deploy cycles, manual testing gates, infrequent change — and those assumptions create hard ceilings on what coding agents can accomplish without constant hand-holding.

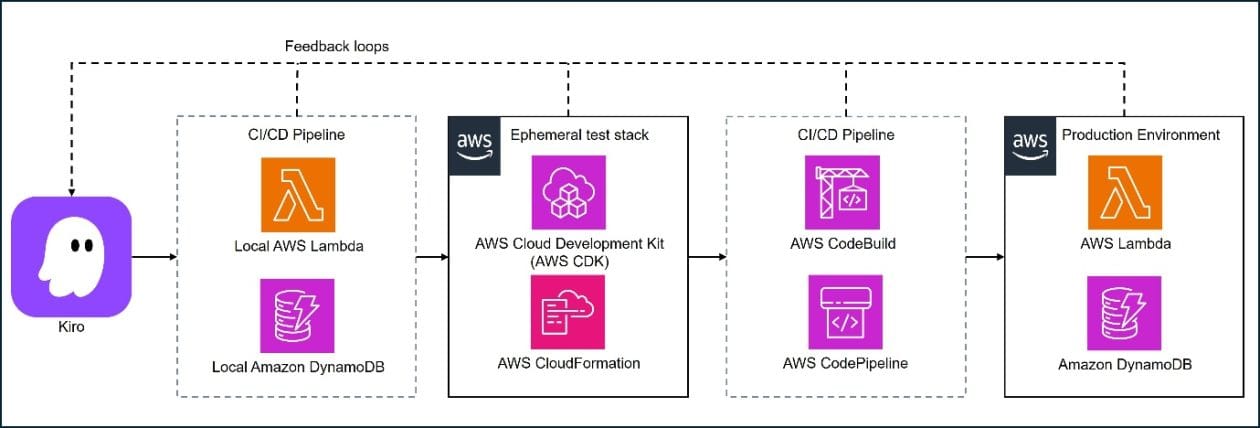

The proposed fix operates at two levels. At the system layer, the recommendation is to treat local emulation as the default agent feedback path: tools like LocalStack, AWS SAM Local, and DynamoDB Local shrink the validation loop from minutes (a real cloud round-trip) to seconds (a local container). Ephemeral test stacks provide cloud-parity validation when it is needed, so the agent avoids the latency and cost of deploying to real infrastructure on every iteration. At the codebase layer, the changes are subtler but equally impactful: consistent project structures, inline documentation that explains why code exists (not just what it does), and named function boundaries that give agents navigable intent rather than opaque blobs of logic.
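To make "local emulation as the default feedback path" concrete, here is a minimal sketch of an endpoint resolver that sends clients to local emulators unless the cloud is explicitly requested. It is an illustration under stated assumptions, not something from the original argument: the `AGENT_FEEDBACK_MODE` variable and the function names are hypothetical, and the ports are assumed to be the emulators' defaults (4566 for LocalStack, 8000 for DynamoDB Local).

```python
import os

# Assumed default ports of the local emulators; adjust if yours differ.
LOCAL_ENDPOINTS = {
    "dynamodb": "http://localhost:8000",  # DynamoDB Local
    "default": "http://localhost:4566",   # LocalStack
}


def resolve_endpoint(service, env=None):
    """Return the endpoint URL a client should use, or None for real AWS.

    AGENT_FEEDBACK_MODE is a hypothetical variable. Any value other than
    "cloud" keeps the agent on the local path, so local is the default.
    """
    env = os.environ if env is None else env
    if env.get("AGENT_FEEDBACK_MODE", "local") == "cloud":
        return None  # let the SDK resolve the real regional endpoint
    return LOCAL_ENDPOINTS.get(service, LOCAL_ENDPOINTS["default"])


def make_client(service, env=None):
    """Build a boto3 client wired to the resolved endpoint."""
    import boto3  # imported lazily so the resolver works without boto3

    endpoint = resolve_endpoint(service, env)
    kwargs = {"region_name": "us-east-1"}
    if endpoint is not None:
        # Emulators accept placeholder credentials; never send these to AWS.
        kwargs.update(
            endpoint_url=endpoint,
            aws_access_key_id="test",
            aws_secret_access_key="test",
        )
    return boto3.client(service, **kwargs)
```

With this shape, an agent's test run talks to a local container by default, and an ephemeral test stack is reached only when the run sets `AGENT_FEEDBACK_MODE=cloud`. Making the slow path the opt-in one is the design choice that matters here; the specific variable name is incidental.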

The core reframe here matters: "The solution is not better prompts — it's an architecture that treats fast feedback and clear boundaries as first-class concerns." A human developer facing a four-minute CI run eventually learns to batch changes. An agent does not adapt that way; it keeps iterating and accumulates uncertainty with every unvalidated step. Cloud engineers who want real productivity from coding agents need to treat fast feedback loops and legible codebases as infrastructure decisions, not nice-to-haves.
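As a small illustration of what a "legible codebase" can look like, the sketch below uses named function boundaries and comments that record why a rule exists. Everything in it is hypothetical: the order-routing domain, the thresholds, and the stated reasons are invented for the example.

```python
from dataclasses import dataclass

# Hypothetical limits, chosen only for illustration.
MAX_RETRIES = 3
REVIEW_THRESHOLD_CENTS = 500_000


@dataclass
class Order:
    order_id: str
    total_cents: int
    retries: int = 0


def needs_manual_review(order: Order) -> bool:
    # Why: (hypothetical) large orders are checked by a person before
    # fulfilment, so this must be evaluated before any automatic retry.
    return order.total_cents >= REVIEW_THRESHOLD_CENTS


def is_retryable(order: Order) -> bool:
    # Why: (hypothetical) the downstream service fails transiently, but
    # repeated failures usually mean bad data, so retries are capped.
    return order.retries < MAX_RETRIES


def route_order(order: Order) -> str:
    """Pick a queue for a failed order; each branch is a named decision."""
    if needs_manual_review(order):
        return "review"
    if is_retryable(order):
        return "retry"
    return "dead_letter"
```

An agent asked to change the retry policy can locate `is_retryable`, read the reason for the cap, and edit one function. The same logic written as a single inline conditional would force it to infer that intent.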