Codex CLI 0.121.0 Is What a Serious Agent Tool Looks Like When It Stops Chasing Demos

OpenAI’s most important Codex release this week was not the one with the flashy browser, computer-use demo, and “work OS” ambition. It was the quieter follow-up the day before. Codex CLI 0.121.0 reads like infrastructure, and that is exactly why it matters. Once a coding agent graduates from novelty to daily tool, the differentiator stops being whether it can impress you in a ten-minute demo and starts being whether it can survive the dull, hostile, very real conditions of software teams: plugin sprawl, sandbox weirdness, state drift, Windows path nonsense, memory that needs boundaries, and security teams that ask annoying but entirely reasonable questions.

The official changelog for 0.121.0 is long in the way good tooling releases are long. OpenAI added marketplace installation support from GitHub, git URLs, local directories, and direct marketplace.json sources. It expanded MCP and plugin support with MCP Apps tool calls, namespaced registration, parallel-call opt-in, and sandbox-state metadata. It added memory controls in both the TUI and app server, improved prompt history with Ctrl+R reverse search, shipped a secure devcontainer profile with bubblewrap support, and hardened release plumbing with pinned GitHub Actions, dependency constraints, and release-build verification in CI. If you are looking for the one shiny bullet point, you are reading it wrong. The story here is systems maturity.
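A toy example makes namespaced registration easier to appreciate. This sketch is purely illustrative. `ToolRegistry`, its `register` and `call` methods, and the `server.tool` naming convention are all hypothetical, not Codex's actual API or naming scheme. It shows only the general problem that namespacing solves: two MCP servers that each expose a tool called `search` can coexist instead of one silently shadowing the other.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tool signature: takes one string argument, returns a string.
ToolFn = Callable[[str], str]


@dataclass
class ToolRegistry:
    """Hypothetical registry that keys tools by 'server.tool', not bare tool name."""

    tools: dict[str, ToolFn] = field(default_factory=dict)

    def register(self, server: str, tool: str, fn: ToolFn) -> str:
        # Qualify the tool name with its server so identical names don't collide.
        qualified = f"{server}.{tool}"
        if qualified in self.tools:
            # Refuse silent overwrites; a duplicate is a configuration error.
            raise ValueError(f"duplicate tool registration: {qualified}")
        self.tools[qualified] = fn
        return qualified

    def call(self, qualified: str, arg: str) -> str:
        # Look up by fully qualified name and invoke the tool.
        return self.tools[qualified](arg)


if __name__ == "__main__":
    reg = ToolRegistry()
    reg.register("github", "search", lambda q: f"github results for {q!r}")
    reg.register("slack", "search", lambda q: f"slack results for {q!r}")
    print(reg.call("github.search", "flaky test"))
    print(reg.call("slack.search", "flaky test"))
```

With bare names, the second `search` registration would either overwrite the first or fail ambiguously. With qualified names, both remain addressable, and a genuine duplicate fails loudly.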

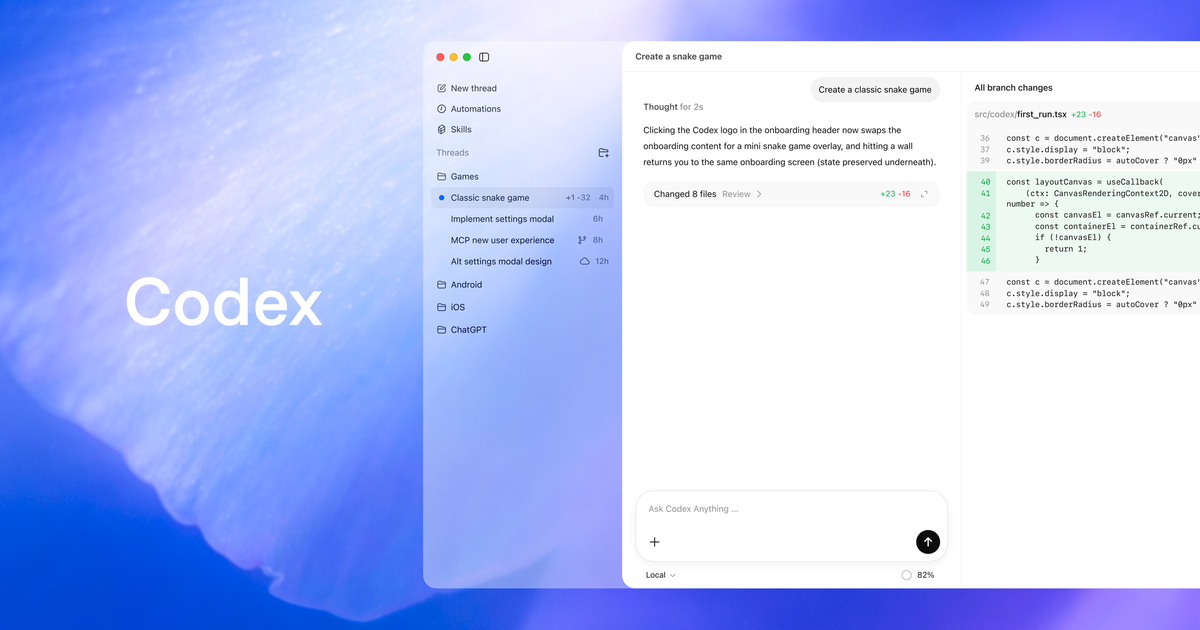

The marketplace work is the clearest signal that OpenAI wants Codex to be more than a single binary sitting alone in a terminal. A serious agent ecosystem needs distribution. It needs a way for skills, app integrations, and MCP servers to move from “clever setup in one engineer’s dotfiles” to something a team can actually reuse. OpenAI’s plugin docs make that ambition explicit: plugins bundle skills, apps, and MCP servers into reusable workflows, with examples like Gmail, Google Drive, and Slack. In other words, Codex is no longer only about generating code inside the repo. It is trying to become a routing layer across the surrounding work.

That matters because the coding-agent market is quietly shifting from model competition to workflow competition. A year ago, the sales pitch was basically, “our model is better at code.” That still matters, obviously. But once Codex, Copilot CLI, Claude Code, and the rest all cross a competence threshold, the argument moves up the stack. Can the tool install capabilities cleanly? Can it keep state across sessions? Can it interoperate with external systems without feeling like a science project? Can you explain its security posture to a platform team without sounding like you joined a cult? Version 0.121.0 is OpenAI working on that layer.

The memory controls are a good example. Memory is easy to market and hard to govern. OpenAI’s Codex memory docs are refreshingly sober about it. Memories are off by default, unavailable in some regions at launch, stored as generated local state under ~/.codex/memories/, and not meant to replace checked-in instructions like AGENTS.md. That is the correct framing. Stable project rules belong in auditable files. Memory is useful for durable preferences, repeated workflow context, and accumulated local knowledge that would otherwise need to be re-explained every session. The 0.121.0 release matters because it adds controls for memory mode, reset, deletion, and cleanup. Translation: OpenAI knows “helpful recall” becomes “creepy or unreliable hidden state” unless users can inspect and govern it.
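Memories are stored as local files, so a team piloting the feature can at least inventory them. Below is a minimal Python sketch that assumes only the ~/.codex/memories/ location described in the docs. It makes no assumption about file names or format, and `list_memory_files` is a hypothetical helper, not part of Codex. It lists each file with its size and last-modified time, so a person can review what has accumulated before reaching for the built-in reset or deletion controls.

```python
from datetime import datetime, timezone
from pathlib import Path

# Location described in the Codex memories docs; pass another path to override.
DEFAULT_MEMORY_DIR = Path.home() / ".codex" / "memories"


def list_memory_files(root: Path = DEFAULT_MEMORY_DIR) -> list[tuple[str, int, str]]:
    """Return (relative path, size in bytes, UTC mtime) for every file under root.

    Read-only: it inventories what exists and never modifies or parses the files,
    since their internal format is not assumed here.
    """
    if not root.is_dir():
        # Memories are off by default, so a missing directory is normal.
        return []
    entries = []
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        stat = path.stat()
        mtime = datetime.fromtimestamp(stat.st_mtime, tz=timezone.utc)
        entries.append(
            (path.relative_to(root).as_posix(), stat.st_size, mtime.isoformat(timespec="seconds"))
        )
    return entries


if __name__ == "__main__":
    files = list_memory_files()
    if not files:
        print(f"No memory files found under {DEFAULT_MEMORY_DIR}")
    for rel, size, mtime in files:
        print(f"{mtime}  {size:>8} B  {rel}")
```

The helper is deliberately read-only. Deletion and cleanup belong to the controls Codex itself provides, so a script like this serves as an audit aid rather than a replacement for them.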

The sandbox and devcontainer work is even more important than the marketplace story, because this is where enterprise adoption usually goes to die. Bubblewrap support in a secure devcontainer profile sounds obscure until you realize what it implies. Bubblewrap is a lightweight, unprivileged Linux sandboxing tool built on kernel namespaces. OpenAI is not just trying to make Codex powerful. It is trying to make Codex deployable in environments where "just give it full access" is not an acceptable answer. The same goes for the macOS Unix socket allowlists, private DNS fixes, Windows cwd and session matching fixes, and the cleanup around Guardian timeouts versus policy denials. These are not cosmetic bug fixes. They are the product learning how to speak the language of real operational boundaries.

There is also a subtler product unification story running through this release. The app-server updates, marketplace plumbing, memories, MCP expansion, filesystem metadata work, and instruction-source exposure all point in the same direction: OpenAI does not see Codex CLI as a separate side project for terminal romantics. It sees Codex as one product family expressed through several clients. The app gets the headlines. The CLI gets the boring upgrades that make the whole thing durable. If you are evaluating OpenAI’s strategy, that is the right split. Attention comes from visible features. Retention comes from the stuff nobody screenshots.

Developers should read this release with two practical questions in mind. First, does this change make Codex easier to operationalize inside a team? Yes. Marketplace installs reduce bespoke setup. Memory controls make local recall safer to experiment with. MCP namespacing and metadata make integrations less hand-wavy. Security hardening and release verification reduce the number of awkward “trust us” conversations. Second, does it change what you should do this week? Also yes. If you are piloting Codex, this is the moment to stop treating it like a solo productivity toy and start testing it like infrastructure.

That means a few concrete things. Put stable instructions in AGENTS.md or checked-in docs, not in vibes. Try plugins and MCP integrations, but do it with the same discipline you would apply to adding CI secrets or OAuth scopes. Use memory only where the recall benefit is obvious and the blast radius is low. Test the secure devcontainer path before some enthusiastic engineer quietly normalizes danger-full-access workflows across your org. And if your team runs mixed operating systems, especially Windows, pay attention to the fixes around session and filesystem behavior. Cross-platform reliability is where a lot of “works great for me” agent tooling falls apart.

The competitive angle is straightforward. GitHub is making Copilot CLI more orchestrator-shaped. Anthropic keeps leaning into deep autonomous terminal work. Cursor owns more of the visible GUI workflow. OpenAI’s answer is increasingly to make Codex broader, more connected, and more governable. That is a defensible strategy, but only if the boring foundation keeps improving. Version 0.121.0 suggests OpenAI understands that the path to owning developer workflow does not run through another sizzle reel. It runs through distribution, state management, sandboxing, and supply-chain hygiene.

That is not glamorous. It is also how software earns the right to stick around.

Sources: OpenAI Codex changelog, GitHub release: rust-v0.121.0, OpenAI Codex plugins docs, OpenAI Codex memories docs