Microsoft Wants Azure Agent Hosting to Stop Looking Like Kubernetes with Extra AI Branding

Microsoft keeps making the same argument about enterprise AI from three different angles, which is usually how you can tell the company actually means it. First it pushed Foundry as the control plane. Then it pushed MCP as the tool boundary. Now it is pushing Hosted Agents as the answer to an uglier operational question: what happens after a team builds an agent and realizes it has accidentally signed up to run a platform?

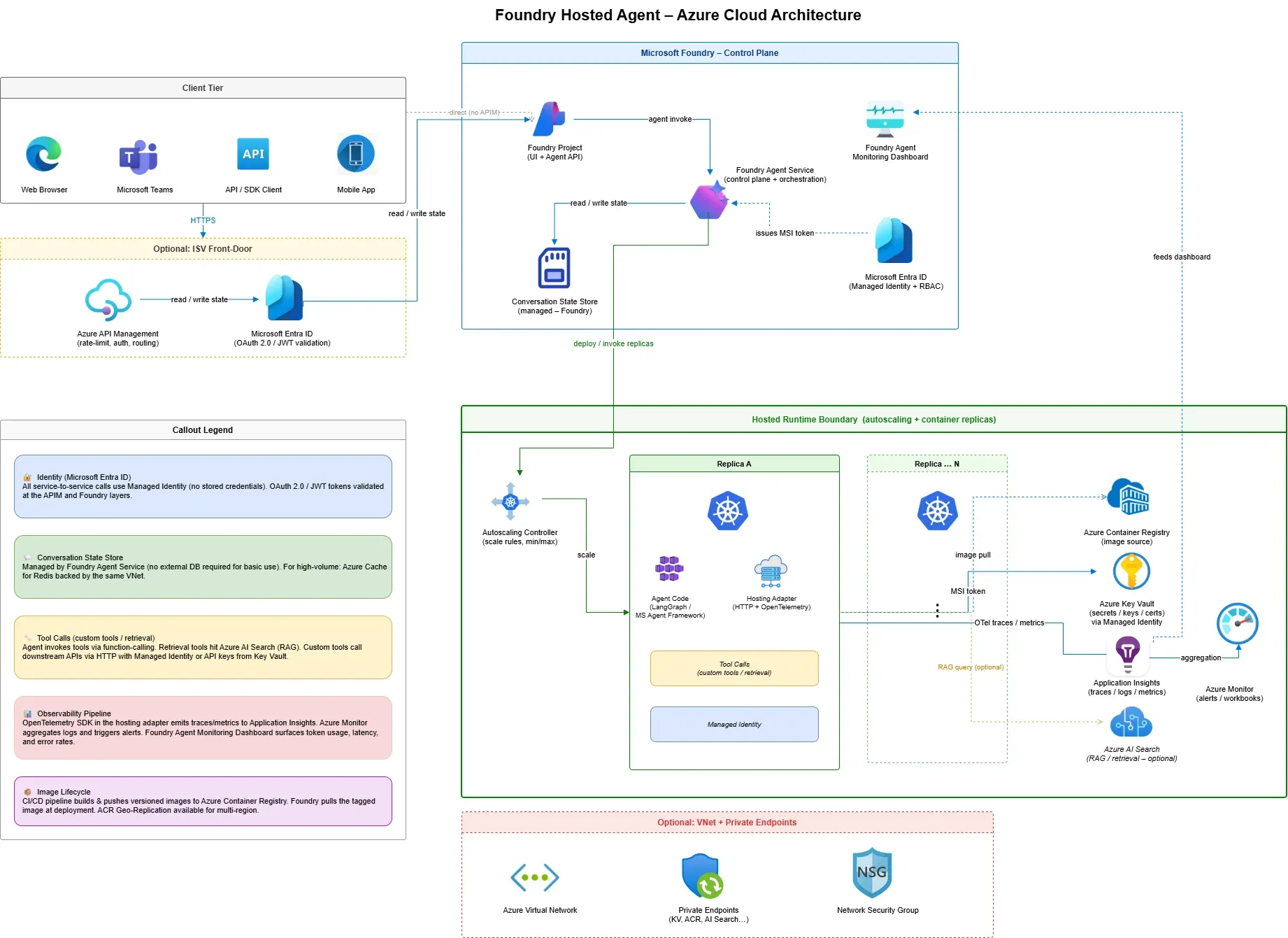

That is the real story behind Microsoft’s new post on Foundry Hosted Agents. On paper, this looks like another Azure hosting explainer. In practice, it is a bid to turn agent deployment into a managed middle layer that sits between do-it-yourself infrastructure and no-code copilots. Microsoft explicitly compares six hosting options, from Azure Functions and App Service to Container Apps, AKS, Foundry Agents, and Foundry Hosted Agents. When a vendor publishes a comparison table like that, it is not just educating customers. It is trying to define the market category on its own terms.

The interesting detail is where Hosted Agents sits in that matrix. Microsoft describes it as a way to run containerized agent applications on Foundry Agent Service with agent-native abstractions, a managed lifecycle, and built-in OpenTelemetry observability, while still letting teams bring their own framework. LangGraph is supported. Microsoft Agent Framework is supported. Custom code is supported. That matters because most platform teams do not want the operational burden of AKS for every agent workload, but they also do not want to throw away existing application code just to fit inside a more opinionated no-code agent shell.

Microsoft is also fairly concrete about the runtime envelope. The post lists resource shapes ranging from 0.25 CPU and 0.5 GiB of memory up to 4 CPU and 8 GiB, with scale-to-zero available by setting min-replicas: 0 and a preview cap of 5 replicas. The hosting adapter handles protocol translation, conversation management, streaming, and telemetry, and during local development the agent can run on localhost:8088. In other words, Microsoft is trying to reduce the number of boring-but-essential things every agent team has to build for itself before security and operations will take the project seriously.
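To make that envelope tangible, here is a minimal Python sketch of a pre-deployment check a platform team might write for itself. The numeric limits are the ones quoted above; the AgentSizing type and check_sizing function are hypothetical names invented for illustration, not part of any Foundry SDK. The sketch also assumes any value between the quoted endpoints is acceptable, which the post may not guarantee if only specific CPU and memory pairs are offered.

```python
from dataclasses import dataclass

# Limits quoted in the article for the preview; everything else is illustrative.
MIN_CPU, MAX_CPU = 0.25, 4.0
MIN_MEMORY_GIB, MAX_MEMORY_GIB = 0.5, 8.0
PREVIEW_MAX_REPLICAS = 5


@dataclass(frozen=True)
class AgentSizing:
    """Hypothetical sizing request; not a Foundry API type."""
    cpu: float
    memory_gib: float
    min_replicas: int = 0
    max_replicas: int = 1

    @property
    def scales_to_zero(self) -> bool:
        # Scale-to-zero corresponds to min-replicas: 0 in the article.
        return self.min_replicas == 0


def check_sizing(s: AgentSizing) -> list[str]:
    """Return human-readable problems; an empty list means the request fits."""
    problems = []
    if not MIN_CPU <= s.cpu <= MAX_CPU:
        problems.append(f"cpu {s.cpu} is outside {MIN_CPU}-{MAX_CPU}")
    if not MIN_MEMORY_GIB <= s.memory_gib <= MAX_MEMORY_GIB:
        problems.append(
            f"memory {s.memory_gib} GiB is outside {MIN_MEMORY_GIB}-{MAX_MEMORY_GIB}"
        )
    if s.min_replicas < 0:
        problems.append("min_replicas cannot be negative")
    if s.max_replicas < 1:
        problems.append("max_replicas must be at least 1")
    if s.max_replicas > PREVIEW_MAX_REPLICAS:
        problems.append(f"max_replicas exceeds the preview cap of {PREVIEW_MAX_REPLICAS}")
    if s.min_replicas > s.max_replicas:
        problems.append("min_replicas is greater than max_replicas")
    return problems


if __name__ == "__main__":
    request = AgentSizing(cpu=8, memory_gib=16, min_replicas=0, max_replicas=10)
    for problem in check_sizing(request):
        print(problem)
```

A check like this belongs in CI rather than in the agent itself: it catches a workload that has outgrown the preview limits before anyone discovers it at deploy time.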

The platform fight is moving above the model layer

That shift matters more than the product branding. For the last year, AI platform competition has been narrated like a model arms race: context windows, benchmark screenshots, price cuts, and catalog breadth. But most enterprises are not blocked on whether they can access another model. They are blocked on whether they can host, monitor, govern, and publish agent systems without turning every deployment into a bespoke reliability project.

Hosted Agents is Microsoft’s answer to that bottleneck. The service effectively says: keep your preferred workflow framework, keep writing code, keep containers if you need them, but stop rebuilding the scaffolding around conversations, streaming, response formatting, and observability. That is a much smarter value proposition than “please migrate your entire stack into our one blessed framework.” Enterprises hate ideological rewrites. They like operational shortcuts that do not force them to abandon prior decisions.

This is also where the surrounding Microsoft documentation becomes more important than the announcement post itself. Microsoft Learn already has separate preview readmes for LangGraph adapters and Agent Framework adapters, and the Agent 365 documentation frames Microsoft Agent 365 as an IT admin control plane for registry, access control, visualization, interoperability, and security. The doc set also makes clear that publishing a Foundry hosted agent into the Microsoft 365 ecosystem creates a dedicated agent identity and may require reworking RBAC after publication. That is the kind of detail you only document when you expect real customers to trip over real governance issues.

The strategic implication is obvious. Microsoft does not just want to sell models and inference endpoints. It wants to own the operational seam where agent code becomes a governed enterprise workload. If Azure can become the default place where agent apps are deployed, observed, and then published into Copilot, Teams, or other business surfaces, the platform gets stickier for reasons that have nothing to do with winning a headline benchmark on a given Tuesday.

Boring infrastructure is the point, not a flaw

There is a broader lesson here for builders. A lot of agent discourse still treats infrastructure as a secondary concern, something you handle after the clever orchestration logic works. That is backward. Infrastructure is where most enterprise AI projects either become software or remain demos. You can have a beautiful multi-agent flow on a whiteboard and still fail to ship because nobody trusts the lifecycle, the telemetry is too generic, or publishing into downstream channels turns identity into a mess.

Hosted Agents is Microsoft admitting that reality out loud. The promise is not that Azure will make agent logic smarter. The promise is that Azure will make the operational contract around that logic less painful. That is exactly the kind of product decision enterprise customers tend to reward.

That said, nobody should confuse preview polish with production certainty. Microsoft’s own post notes that private networking is not yet available, and the current scaling limits are modest. Teams evaluating the service should pressure-test cold starts under scale-to-zero, long-running workflows, streaming behavior under load, and the boundary between project identity and published agent identity. If compliance, networking, or data residency requirements are strict, you still need to know where the managed abstraction stops and your platform obligations start.
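The cold-start test in particular is easy to sketch. The Python below is a minimal, assumption-heavy harness: it takes any callable that returns an iterable of streamed chunks, times how long the first chunk takes after an idle period, and compares that with the median of a few warm calls. The function names are hypothetical, and how you actually invoke a hosted agent (URL, auth, request body) is deliberately left to the caller because the article does not specify it.

```python
import statistics
import time
from typing import Callable, Iterable


def time_to_first_chunk(stream_call: Callable[[], Iterable[bytes]]) -> float:
    """Seconds from issuing the call until the first streamed chunk arrives."""
    start = time.perf_counter()
    for _ in stream_call():
        return time.perf_counter() - start
    raise RuntimeError("stream ended without producing a chunk")


def compare_cold_and_warm(
    stream_call: Callable[[], Iterable[bytes]], warm_samples: int = 5
) -> dict:
    """Time one call after idle, then several back-to-back calls.

    The caller is responsible for letting the service idle long enough to
    scale to zero before invoking this function.
    """
    cold = time_to_first_chunk(stream_call)
    warm = [time_to_first_chunk(stream_call) for _ in range(warm_samples)]
    warm_median = statistics.median(warm)
    return {
        "cold_s": cold,
        "warm_median_s": warm_median,
        "penalty_s": cold - warm_median,
    }


if __name__ == "__main__":
    # Stand-in for a real streaming client: the first call is slow, later ones are not.
    calls = {"n": 0}

    def fake_stream():
        calls["n"] += 1
        if calls["n"] == 1:
            time.sleep(0.2)  # simulated replica start-up
        yield b"first chunk"

    print(compare_cold_and_warm(fake_stream, warm_samples=3))
```

Measuring time to first chunk rather than total response time keeps the number about the platform: it isolates replica start-up and connection overhead from however long the model takes to finish generating.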

But even with those caveats, the direction is correct. The future of enterprise agents probably does not look like every company operating tiny bespoke Kubernetes platforms with extra AI branding layered on top. It looks more like managed hosting surfaces that preserve framework choice while absorbing the repetitive platform work. Microsoft seems to understand that earlier than some competitors.

Practically, engineering leaders should treat this launch as a prompt to revisit their own agent architecture. If your team is already building with LangGraph or Microsoft Agent Framework, ask whether the infrastructure you are owning is actually strategic or just inherited toil. If you are still early, decide whether you want maximum infrastructure control or a narrower platform abstraction that may get you to production faster. And if you are in security or platform engineering, pay close attention to the Agent 365 angle. The hosting story is not separate from governance. It is the front door to it.

Microsoft’s strongest Azure AI argument here is not “we have agents too.” It is “we can make the painful middle of agent operations boring.” In enterprise software, that is usually where the money is.

Sources: Microsoft DevBlogs, Microsoft Learn, Microsoft Learn LangGraph adapter docs, Microsoft Learn Agent Framework adapter docs