Microsoft’s Pitch for Agentic Refactoring Is Simple: Put the Coding Agent in a MicroVM and Stop Arguing About YOLO Mode

Most agentic-coding debates are still stuck in the least interesting place possible: whether developers should trust a model enough to let it run with fewer approval prompts. Microsoft’s latest Azure post makes a more useful argument. The real question is not whether you should embrace YOLO mode on your laptop. It is whether you can move the risky parts of autonomous refactoring into an environment where autonomy is finally cheap enough to use and isolated enough to survive.

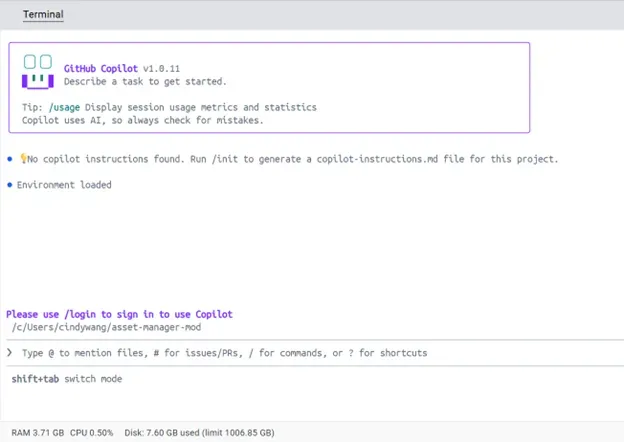

That is the pitch behind pairing GitHub Copilot with Docker Sandbox microVMs for legacy modernization. On the surface, the demo is straightforward: take an old Java application, put it in a container-friendly workflow, let Copilot help refactor and modernize it, and use Docker’s sandboxed environment to keep the blast radius under control. But the strategic point is bigger than a sample app. Microsoft is effectively arguing that the next useful coding-agent pattern is not “agent plus permissions dialog.” It is “agent plus hardened runtime.”

That distinction matters because legacy modernization is exactly the kind of work where coding agents look best in demos and worst in production. The jobs are repetitive, tedious, and structurally expensive: update old dependencies, containerize brittle applications, patch deprecated APIs, add error handling, untangle filesystem assumptions, and run the same test-and-fix loop dozens or hundreds of times. Humans are slow at this because the work is dull. Agents are attractive because they do not get bored. But the moment you let an agent build containers, run dependency updates, and execute test stacks on a real host, the security story turns ugly fast.

Microsoft is refreshingly direct about the worst part. In a normal container setup, many coding-agent workflows end up mounting /var/run/docker.sock so the agent can build images or orchestrate compose stacks. That is not a cute implementation detail. Anyone who can talk to the host Docker daemon effectively has root on the host, so mounting the socket hands the agent that level of control. A compromised toolchain, a malicious package, or a sloppy prompt chain can turn “please modernize this app” into “please enumerate containers, inspect images, and poke at systems the agent was never supposed to see.” If your security model boils down to “well, hopefully the AI behaves,” you do not have a security model.
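
To make the socket problem concrete, here is a minimal Python sketch of a pre-flight check that flags an agent container config if it bind-mounts the host Docker socket. Nothing here comes from Microsoft’s post: the function name `exposes_host_daemon`, the list of socket paths, and the assumption that volumes arrive as short-form `source:target[:mode]` strings are all illustrative.

```python
from typing import Iterable

# Host paths that commonly point at the Docker daemon socket.
# (Assumed list for illustration; extend it for your own hosts.)
DOCKER_SOCKET_PATHS = ("/var/run/docker.sock", "/run/docker.sock")


def exposes_host_daemon(volume_specs: Iterable[str]) -> bool:
    """Return True if any short-form volume spec bind-mounts the Docker socket.

    Each spec is expected in `source:target[:mode]` form, for example
    "/var/run/docker.sock:/var/run/docker.sock:ro".
    """
    for spec in volume_specs:
        # The host-side source is everything before the first colon.
        source = spec.split(":", 1)[0].rstrip("/")
        if source in DOCKER_SOCKET_PATHS:
            # A ":ro" suffix does not help: the container can still
            # send API requests to the daemon over the socket.
            return True
    return False


if __name__ == "__main__":
    agent_volumes = [
        "./legacy-app:/workspace",
        "/var/run/docker.sock:/var/run/docker.sock",
    ]
    if exposes_host_daemon(agent_volumes):
        print("refusing to start agent: host Docker socket is mounted")
```

A check like this only catches the obvious case. It is a guardrail for the old setup, not a substitute for giving the agent its own daemon.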

Docker Sandbox is Microsoft’s proposed escape hatch. Instead of letting the agent touch the host Docker environment, the sandbox gives it a private Docker daemon inside a microVM. The post also highlights bidirectional workspace sync with preserved absolute paths, so the ugly reality of old build scripts and hardcoded filesystem assumptions still works across the isolation boundary. That is more important than it sounds. Legacy code is full of bad habits that are annoying even for humans, and a lot of virtualization setups make them worse by quietly changing path assumptions or file behavior. A secure environment that breaks the build is still a broken workflow.

The interesting part is not the microVM. It is the economics of approval

The sharpest line in the piece is the argument that secure sandboxes let teams run agents in effectively “YOLO mode” without paying the usual approval tax. That is the real product claim. Enterprises do not lose most of their time because models are slow at generating code. They lose it because every autonomous step that might be destructive needs human supervision, and human supervision does not scale. If you are modernizing a handful of repos, manual approvals are annoying. If you are modernizing hundreds or thousands, they become the bottleneck.

Microsoft says autonomous agents in these workflows merge roughly 60% more pull requests than agents that require constant supervision. That number should be read carefully. It is a vendor-reported figure, and it is not proof that any given agent is now trustworthy enough to replace engineering judgment. What it does suggest is that security boundaries change throughput. Once the environment is strong enough, you can let the agent do more work before a human has to intervene. In other words, runtime design is now part of developer productivity.

This is one of the more important shifts happening across the coding-agent market. Vendors spent the last year selling intelligence: better models, better benchmarks, longer context windows, nicer chat surfaces. The next year is going to be about operating conditions. Which agent can run safely against real codebases? Which one can survive dependency churn, build weirdness, and long validation loops? Which one can work in parallel without turning the host machine into an unreviewed security experiment? Microsoft’s post is useful because it stops pretending the hard part is just model IQ.

Legacy modernization is exactly where this approach could stick

There is also a nice strategic fit here. Greenfield coding gets the hype, but legacy modernization is where enterprises actually have budget, pain, and measurable return. Nobody needs an AI agent to make a to-do app in React. Plenty of companies need help dragging a creaky Java or .NET estate into something containerized, testable, and supportable. Those projects are expensive because they combine repetitive edits with enough risk that teams move cautiously. A microVM-backed agent is one of the few plausible ways to push both sides of that equation at once: more automation without pretending security does not matter.

The post’s details reinforce that. Microsoft calls out filtered HTTP and HTTPS access, a smart deny-all policy, blocked local-network and cloud-metadata access, and path-preserving sync between host and sandbox. Those are the kinds of controls that make dependency-upgrade and bulk-refactor work less terrifying. They will not solve every problem. They do reduce the chance that a package-install step or integration test quietly turns into data exfiltration. For teams staring at large dependency trees and old infrastructure assumptions, that is not glamorous, but it is useful.
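
As a rough illustration of what a policy of that shape means in practice, here is a minimal Python sketch of a deny-by-default egress check. It is not Docker’s or Microsoft’s implementation. The function name `is_egress_allowed`, the `ALLOWED_HOSTS` entries, and the idea that the caller passes in an already-resolved IP address are all assumptions made for the example.

```python
import ipaddress

# Hypothetical allowlist of package registries the agent may reach.
ALLOWED_HOSTS = frozenset({"repo.maven.apache.org", "registry.npmjs.org"})


def is_egress_allowed(
    scheme: str,
    host: str,
    resolved_ip: str,
    allowed_hosts: frozenset = ALLOWED_HOSTS,
) -> bool:
    """Deny by default; allow only HTTP(S) to allowlisted public hosts."""
    # Only filtered HTTP and HTTPS traffic is eligible at all.
    if scheme.lower() not in ("http", "https"):
        return False

    ip = ipaddress.ip_address(resolved_ip)
    # Block loopback, private ranges, and link-local addresses. The
    # common cloud metadata endpoint 169.254.169.254 is link-local,
    # so it is rejected here even if the hostname looks harmless.
    if ip.is_loopback or ip.is_private or ip.is_link_local:
        return False

    # Everything not explicitly allowlisted is denied.
    return host.lower() in allowed_hosts


if __name__ == "__main__":
    # 151.101.44.215 is just an arbitrary public address for the demo.
    print(is_egress_allowed("https", "repo.maven.apache.org", "151.101.44.215"))
    print(is_egress_allowed("http", "metadata.internal", "169.254.169.254"))
```

Checking the resolved address as well as the hostname is a deliberate choice in this sketch: an allowlisted name that resolves to an internal address is still refused.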

There is a second-order effect here too. Once secure sandboxes become normal, the industry’s tired argument about “should agents have permission to execute commands?” starts to look incomplete. Of course they need permission to execute commands. The better question is: under what boundary, with what network policy, with what audit trail, and with what recovery path? The teams that answer those questions well will get far more value out of coding agents than the teams still arguing about whether an approvals toggle feels scary.

What practitioners should actually do

If you are evaluating coding agents for modernization work, take the hint and stop judging them as standalone chat products. Test them as systems. Can they build and run containers without host-daemon exposure? Can they update dependencies in an environment with outbound filtering? Can they preserve filesystem assumptions well enough for old build scripts to survive? Can they work across multiple repos in parallel without requiring a human to click approve every few minutes?

Just as importantly, separate “agent autonomy” from “host trust.” Those should not be the same dial. Give the agent more freedom inside a runtime that is intentionally constrained. Keep privileged CI, production credentials, and sensitive network paths outside that boundary unless there is a compelling reason not to. The secure default for 2026 is not to neuter the agent. It is to harden the environment.

Microsoft’s post is not the last word on this, and it reads more like an architecture pattern than a finished standard. That is fine. The important thing is the direction of travel. Coding agents are becoming useful enough that the old permission model of endless human approvals on a trusted host no longer scales. MicroVM-backed sandboxes are one of the first credible answers that do not require pretending the risks are imaginary.

The market has spent months arguing about which coding agent is smartest. Fair enough. But if you are responsible for real software estates, especially old ones, the more consequential question is which environment lets a merely smart-enough agent do useful work safely and repeatedly. On that front, Microsoft’s message is pretty clear: stop debating YOLO mode in the abstract, and start designing runtimes that can contain it.

Sources: Microsoft Azure Blog