NVIDIA’s Factory AI Push Looks More Like Infrastructure Procurement Than Another Robot Demo

NVIDIA’s Hannover Messe announcement reads like a trade-show roundup if you skim it. Read it closely, and it looks more like a procurement memo for the next decade of industrial software. The company is not really selling factories on “AI” in the abstract anymore. It is selling the same thing it has been selling hyperscalers: a full stack, a preferred architecture, and a future in which the safest way to buy capability is to buy more of NVIDIA’s map.

That matters because manufacturing has been one of the easiest places to fake AI progress. A robot arm in a demo cell, a dashboard with a chatbot bolted on, a digital twin nobody trusts once the keynote ends. NVIDIA’s pitch at Hannover is more serious than that. It is trying to turn manufacturing AI into infrastructure, which is both more credible and more strategically aggressive.

This is the cloud playbook wearing steel-toe boots

The most telling detail in the post is not a robot. It is Deutsche Telekom’s Industrial AI Cloud, which NVIDIA describes as one of Europe’s largest AI factories, built in Germany on NVIDIA infrastructure. That is a loaded phrase. “AI factory” is NVIDIA’s favorite way to frame modern compute clusters, because factories imply repeatable output, measurable efficiency, and capital investment rather than speculative experimentation. By moving that language into manufacturing itself, NVIDIA is collapsing the distance between the data center and the plant floor.

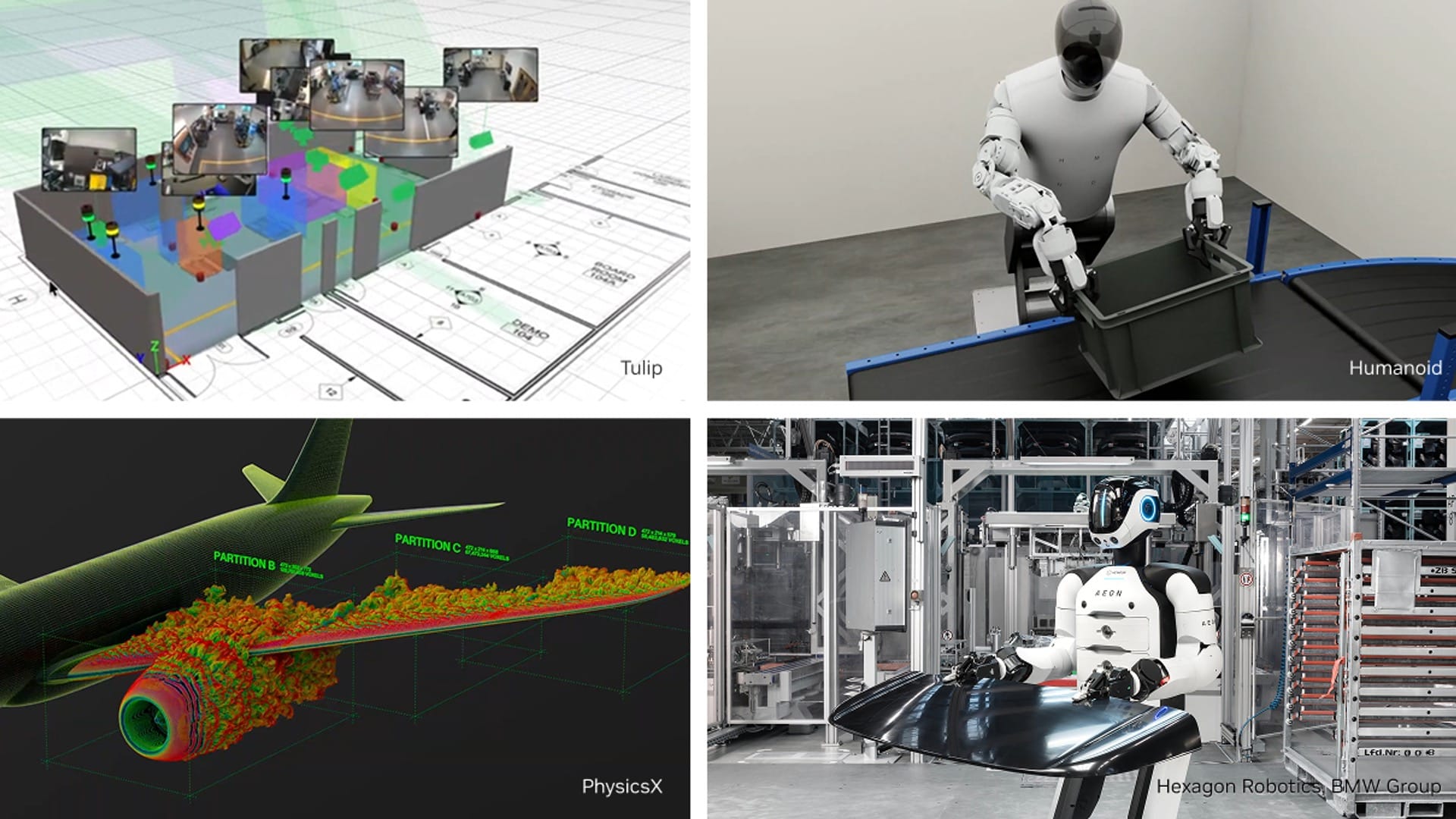

The partner list makes the pattern obvious. Siemens, SAP, PhysicsX, Wandelbots, Agile Robots and EDAG are not being pitched as isolated demos. They are being positioned as workloads that sit on top of a sovereign industrial compute layer. Dell, IBM, Lenovo and PNY show up as the systems vendors. Omniverse and OpenUSD show up as the simulation substrate. Metropolis, Cosmos Reason 2 and Nemotron show up as the perception and reasoning layer. Jetson Thor and IGX Thor cover the edge. QNX OS for Safety 8.0 plus NVIDIA Halos cover the argument that some of this can eventually be trusted near real equipment.

That is not a product launch. It is an attempt to define the reference architecture.

There is a specifically European reason this pitch lands now. “Sovereign” is doing real work here. Manufacturers in Germany and elsewhere in Europe are not eager to pump sensitive production telemetry, design data, quality logs and operational know-how into generic black-box cloud pipelines if they can avoid it. NVIDIA is reading that correctly. If you can offer local or regionally controlled infrastructure, pair it with simulation tools and edge inference, and wrap the whole thing in a compliance-and-safety story, you move AI spending from innovation theater into normal industrial budgeting.

The best numbers in the story are not from NVIDIA

NVIDIA’s own post is broad by design, but the adjacent partner disclosures are where the story becomes concrete. Microsoft says Krones used AI-based fluid simulation inside a digital twin to cut simulation time from four hours to under five minutes. That is a reduction of roughly 98%, and more importantly, it changes how often engineers can ask useful questions. A simulation that takes four hours is a scheduled event. One that takes five minutes becomes part of the workflow.
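For readers who want to sanity-check the Krones figures and see what they imply for iteration frequency, here is a minimal sketch. The function names and the eight-hour shift are assumptions for illustration, not anything from Microsoft or Krones, and five minutes is treated as an upper bound because the reported figure is "under five minutes."

```python
def percent_reduction(before: float, after: float) -> float:
    """Return the percentage by which `after` is smaller than `before`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100.0


def runs_per_window(window_minutes: float, run_minutes: float) -> int:
    """Return how many back-to-back runs fit inside a time window."""
    if run_minutes <= 0:
        raise ValueError("run_minutes must be positive")
    return int(window_minutes // run_minutes)


BEFORE_MIN = 4 * 60  # four hours, as reported
AFTER_MIN = 5        # upper bound for "under five minutes"
SHIFT_MIN = 8 * 60   # assumed eight-hour shift (not from the source)

if __name__ == "__main__":
    reduction = percent_reduction(BEFORE_MIN, AFTER_MIN)
    print(f"time reduction: at least {reduction:.1f}%")
    print(f"runs per shift before: {runs_per_window(SHIFT_MIN, BEFORE_MIN)}")
    print(f"runs per shift after:  {runs_per_window(SHIFT_MIN, AFTER_MIN)}")
```

The runs-per-shift comparison is the part that matters: it puts a number on the difference between a scheduled event and something an engineer can do between meetings.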

Tulip says Terex expects a 3% yield increase and a 10% reduction in rework from Factory Playback, built with NVIDIA’s video-search-and-summarization blueprint and Cosmos Reason 2. Those are not vanity metrics. Yield and rework are where industrial AI either earns its keep or gets cut at the next budgeting cycle. Invisible AI’s Vision Execution System, already deployed at Toyota according to NVIDIA, pushes the same argument from a different angle: the win is not that a model can identify something in a video stream, it is that the system can structure a production cycle, surface the relevant context before issues compound, and do it in a way operators can use.

Humanoid’s HMND 01 example is the flashiest case, but it is also the easiest to misread. The interesting part is not “humanoid in a factory,” which is catnip for headlines and a poor basis for purchasing decisions. The interesting part is that the company says simulation-first development compressed a process that often takes 18 to 24 months down to seven months. That is the industrial AI pattern worth caring about: better simulation, faster iteration, fewer physical mistakes, shorter path from concept to floor.

The market is moving from copilots to closed loops

Here is the deeper shift behind all of this: manufacturing AI is leaving the era of assistant features and entering the era of closed-loop systems. A copilot can summarize a report. A useful industrial system can connect design data, machine telemetry, camera feeds, simulation models and workflow history tightly enough to recommend or trigger action with bounded risk. That requires far more than a good model. It requires data plumbing, real-time context, simulation fidelity, permissions, observability and edge hardware that can survive production environments.
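To make "recommend or trigger action with bounded risk" concrete, here is a minimal sketch of a gate that decides whether a proposed setpoint change is applied automatically, routed to an operator, or held. Everything in it is hypothetical: the `Proposal` and `Bounds` types, the thresholds and the parameter name are illustrative assumptions, not part of any NVIDIA or partner product.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPLY = "auto_apply"  # safe enough to trigger without a human
    RECOMMEND = "recommend"    # show to an operator for approval
    HOLD = "hold"              # outside the allowed envelope, do nothing


@dataclass(frozen=True)
class Proposal:
    parameter: str
    current: float
    proposed: float
    confidence: float  # 0.0 to 1.0, from whatever model produced it


@dataclass(frozen=True)
class Bounds:
    low: float             # hard lower limit for the parameter
    high: float            # hard upper limit for the parameter
    max_step: float        # largest change allowed without approval
    min_confidence: float  # confidence needed for automatic action


def gate(p: Proposal, b: Bounds) -> Decision:
    """Classify a proposed change against hard limits and step size."""
    if not (b.low <= p.proposed <= b.high):
        return Decision.HOLD
    if p.confidence < b.min_confidence:
        return Decision.RECOMMEND
    if abs(p.proposed - p.current) > b.max_step:
        return Decision.RECOMMEND
    return Decision.AUTO_APPLY


if __name__ == "__main__":
    limits = Bounds(low=150.0, high=220.0, max_step=5.0, min_confidence=0.9)
    small = Proposal("oven_temp_c", current=180.0, proposed=183.0, confidence=0.95)
    large = Proposal("oven_temp_c", current=180.0, proposed=200.0, confidence=0.95)
    unsafe = Proposal("oven_temp_c", current=180.0, proposed=240.0, confidence=0.99)
    for proposal in (small, large, unsafe):
        print(proposal.proposed, gate(proposal, limits).value)
```

The point of the sketch is that the model's output is only one input. The limits, the step size and the approval path are where most of the engineering effort and most of the operational sign-off tend to go.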

This is why NVIDIA keeps bundling digital twins, vision models, edge compute and safety language into one narrative. It understands that factory AI will not be won by the prettiest demo model. It will be won by whoever reduces integration pain between design software, plant systems and live operations. If Omniverse becomes the place simulations converge, if Metropolis becomes the default video layer, and if Jetson or IGX become the default on-prem inference targets, NVIDIA gets paid every time the workflow matures from pilot to production.

There is also a competitive subtext here. Industrial software incumbents like Siemens, ABB, Dassault and Schneider do not want to become commodity UI layers sitting on top of someone else’s intelligence stack. Hyperscalers would love these workloads too. NVIDIA’s answer is to be indispensable to both camps: partner with the incumbents, leave room for Azure and others, but make the accelerated substrate and the AI tooling hard to remove. It is a smart position because factories do not rip out control systems lightly, and the vendors that shape the first deployable architecture often get years of downstream leverage.

What engineers should do with this

If you build industrial software, robotics systems, plant analytics, or edge vision products, the practical takeaway is not “buy the robot.” It is “audit your architecture.” Specifically:

- Check whether your data model can unify engineering, telemetry and video context, because isolated dashboards will lose to systems that can reason across all three.

- Treat simulation as part of production engineering, not a pre-sales toy. The teams getting real value are shrinking iteration loops, not just improving renders.

- Design for on-prem or sovereign deployment early if you sell into regulated or export-sensitive environments. The trust story is becoming part of the product, not just the contract.

- Measure outcomes in yield, rework, downtime and commissioning time. If your AI story cannot translate into one of those numbers, it is still marketing.

- Pay attention to safety-certified edge stacks. Many pilots die in the gap between model demo and operational approval.
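The first and fourth items on that list can be sketched together. The snippet below assumes a hypothetical per-unit record that carries engineering, telemetry and video context in one place, then computes first-pass yield and rework rate from it. The field names and sample data are invented for illustration and do not reflect any vendor's schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class UnitRecord:
    """One produced unit with its engineering, telemetry and video context."""
    serial: str
    design_rev: Optional[str] = None                           # engineering context
    telemetry: Dict[str, float] = field(default_factory=dict)  # machine readings
    video_clip: Optional[str] = None                           # reference to a stored clip
    passed_first_time: bool = True
    reworked: bool = False


def first_pass_yield(units: List[UnitRecord]) -> float:
    """Fraction of units that passed inspection without rework."""
    if not units:
        raise ValueError("no units to evaluate")
    return sum(u.passed_first_time for u in units) / len(units)


def rework_rate(units: List[UnitRecord]) -> float:
    """Fraction of units that needed rework."""
    if not units:
        raise ValueError("no units to evaluate")
    return sum(u.reworked for u in units) / len(units)


def missing_context(units: List[UnitRecord]) -> List[str]:
    """Serials of units lacking any of the three context sources."""
    return [
        u.serial
        for u in units
        if not (u.design_rev and u.telemetry and u.video_clip)
    ]


# Illustrative sample data only.
units = [
    UnitRecord("A-001", "rev3", {"torque_nm": 41.8}, "clip-001"),
    UnitRecord("A-002", "rev3", {"torque_nm": 39.2}, "clip-002",
               passed_first_time=False, reworked=True),
    UnitRecord("A-003", "rev3", {}, "clip-003"),
    UnitRecord("A-004", "rev3", {"torque_nm": 42.1}, None),
]

if __name__ == "__main__":
    print(f"first-pass yield: {first_pass_yield(units):.0%}")
    print(f"rework rate: {rework_rate(units):.0%}")
    print(f"units with incomplete context: {missing_context(units)}")
```

The `missing_context` check is the architectural audit in miniature: if a large share of units cannot be joined across all three sources, no amount of model quality will let a system reason across them.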

There is still plenty of reason for skepticism. This is a trade-show announcement, and trade-show announcements are professionally engineered to make roadmaps look like reality. NVIDIA supplies enough evidence to make the story plausible, not enough to declare the category solved. We still need to see what these deployments cost, how brittle they are, how much integration labor they require, and whether the promised benefits survive beyond lighthouse customers and curated demos.

But the direction is clear. NVIDIA is no longer pitching manufacturing AI as a collection of cool components. It is pitching a stack that lets manufacturers buy intelligence the way they buy infrastructure: with sovereignty requirements, safety constraints, systems integrators, and a spreadsheet full of throughput assumptions. That is a much more serious market, and it is one NVIDIA is increasingly well positioned to shape.

The old industrial AI dream was a smarter dashboard. The new one is a factory where simulation, perception, reasoning and execution are tightly connected enough to change how work gets done. NVIDIA is betting that if it can own enough of that loop, the robot demo becomes optional. The purchase order does not.

Sources: NVIDIA Blog, Microsoft, Kongsberg Digital, Humanoid