Google’s Real Gemini Story Is Workflow Consolidation, Not Finals Week Tips

Google did not hand the AI press another shiny same-day launch to chase on April 11, which is probably for the best. The more interesting story is the one that only becomes obvious when you line up Google’s recent Gemini releases side by side: notebooks, audio overviews, guided tutoring, live conversation, and now interactive simulations are no longer separate tricks. They are being assembled into a workflow stack. That matters more than any one feature drop because builders rarely lose to a better demo. They lose to a product that becomes the place work actually happens.

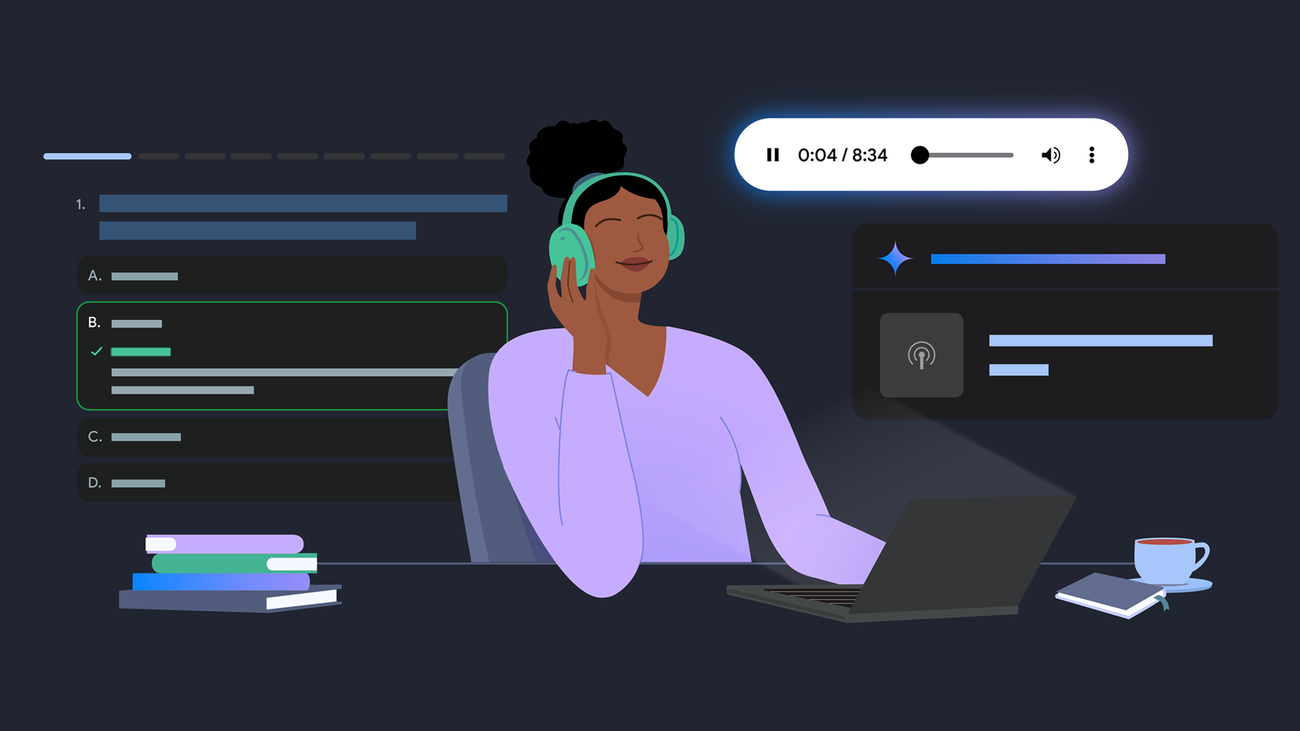

Google’s April 10 post about finals-week study tips reads, on the surface, like polite seasonal content. Underneath, it is a fairly direct roadmap signal. The company tells users to put lecture PDFs, whiteboard photos, class notes, links, and even past chat history into Gemini notebooks, which Google describes as a study command center. Those notebooks sync with NotebookLM, so the same source set can be used across both products. That means a student can ingest course material in Gemini, generate Audio Overviews with the familiar two-host podcast format, move into NotebookLM for video overviews or infographics, then return to Gemini to ask for an essay outline or a quiz on the same corpus.

The new interactive simulations matter here because they close a gap that text-first AI products have struggled with. Google says Gemini can now generate functional visualizations directly in chat, including manipulable systems where users can change variables like gravity and initial velocity to see how an orbit changes. That is a different product category from “here is a diagram.” It nudges Gemini toward exploratory software, not just explanatory software. Add Guided Learning, which Google says is informed by LearnLM and built around probing questions rather than answer vending, and the shape of the product becomes clearer: persistent context, multiple output formats, and a tutoring loop that tries to keep the human engaged in the reasoning process.

This is less a study tool than a prototype for knowledge work

Education is a convenient wrapper because the use case is intuitive and morally legible. Everyone understands the value of summarizing notes, generating practice questions, and visualizing difficult concepts. But the product pattern travels well beyond finals week. Replace “course materials” with onboarding docs, incident runbooks, competitive research, sales collateral, or customer support tickets, and you have a generic architecture for enterprise AI products in 2026. Ingest sources. Preserve context. Generate artifacts. Support guided iteration. Repeat.

That pattern is the part worth paying attention to. Google is quietly moving away from the stateless-chatbot era. The company appears to understand that a model answer, by itself, is not much of a moat. People forget disposable chats. Teams return to workspaces that remember the project, preserve the source material, and let them create reusable outputs without rebuilding context from scratch every morning.

The timing also says something about the NotebookLM relationship. For the last year, Google’s AI product line often looked like a collection of overlapping experiments looking for a hierarchy. Gemini was the assistant, NotebookLM was the source-grounded research tool, and various educational or multimodal capabilities lived in their own marketing lanes. Notebooks are Google’s clearest attempt yet to tell users that these products are part of the same system. If that unification holds, it is more strategically important than the launch copy suggests.

Google’s biggest opportunity, and its biggest packaging problem

There is, however, a very Google limitation lurking in the rollout details. Notebooks in Gemini are launching first to Google AI Ultra, Pro, and Plus subscribers on the web, with mobile, more European countries, and free users coming later. Google also notes restrictions for under-18, Workspace, and Education accounts on some surfaces. That means the product story is cleaner than the distribution story. The workflow is compelling, but the entitlement map is still messy enough to confuse users and slow habit formation.

Practitioners should not miss the lesson. Capability fragmentation is a tax on adoption. If your AI product requires users to remember which tier unlocks which context window, output mode, or collaboration surface, you are spending trust to preserve pricing complexity. Google may be able to absorb that because it controls so many adjacent surfaces. Smaller builders probably cannot.

There is another practical implication. Guided Learning’s design, which emphasizes open-ended questions and deeper understanding, is Google’s answer to a problem every AI product team now faces: if your system only optimizes for immediate answers, it trains users into shallow dependency. That can work for low-stakes productivity tasks, but it becomes a product and policy liability in learning-heavy environments. Google is effectively arguing that pedagogical structure is a feature, not a restraint. Builders in documentation, training, and onboarding should take that seriously. Asking a better follow-up question may create more long-term product value than delivering a slicker instant answer.

What engineers should actually do with this

If you build AI features, stop designing around prompts alone. Design around retained state. Ask whether your product can hold a curated source set, keep a project thread coherent over time, and produce artifacts that remain useful after the model call ends. If you run an engineering or product team, watch how your own workflows map onto this pattern. Many internal copilots still fail because they answer isolated questions well but do a terrible job of carrying context across a real project lifecycle.
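To make "design around retained state" concrete, here is a minimal sketch, assuming a generic `model` callable that stands in for whatever LLM API you actually use. `Workspace`, `ingest`, `ask`, and `make_artifact` are hypothetical names for illustration, not any Google or Gemini API. The point is structural: the curated sources, the conversation thread, and the generated artifacts live in one object that persists across model calls, so every prompt is rebuilt from the project rather than from scratch.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical model interface: takes a fully assembled prompt, returns text.
ModelFn = Callable[[str], str]


@dataclass
class Workspace:
    """A hypothetical project container whose state outlives any single model call."""

    name: str
    sources: Dict[str, str] = field(default_factory=dict)  # curated source set
    thread: List[Tuple[str, str]] = field(default_factory=list)  # (role, text) history
    artifacts: Dict[str, str] = field(default_factory=dict)  # reusable outputs by kind

    def ingest(self, source_id: str, text: str) -> None:
        """Add or replace a source document in the project's source set."""
        self.sources[source_id] = text

    def _context(self) -> str:
        """Rebuild the prompt context from sources plus conversation history."""
        src = "\n".join(f"[{sid}] {text}" for sid, text in sorted(self.sources.items()))
        hist = "\n".join(f"{role}: {text}" for role, text in self.thread)
        return f"SOURCES:\n{src}\nHISTORY:\n{hist}"

    def ask(self, model: ModelFn, question: str) -> str:
        """Answer a question with full project context, then record the exchange."""
        answer = model(f"{self._context()}\nuser: {question}")
        self.thread.append(("user", question))
        self.thread.append(("assistant", answer))
        return answer

    def make_artifact(self, model: ModelFn, kind: str) -> str:
        """Generate a reusable output (quiz, outline, summary) and keep it in the workspace."""
        output = model(f"{self._context()}\nProduce a {kind} grounded only in SOURCES.")
        self.artifacts[kind] = output
        return output


if __name__ == "__main__":
    # Stand-in model for demonstration: reports how many characters of context it received.
    def fake_model(prompt: str) -> str:
        return f"(answer based on {len(prompt)} chars of context)"

    ws = Workspace("finals")
    ws.ingest("lecture-07", "Orbital shape depends on gravity and initial velocity.")
    ws.ask(fake_model, "What determines the shape of an orbit?")
    ws.make_artifact(fake_model, "practice quiz")
    print(ws.artifacts["practice quiz"])
```

This version naively concatenates every source and the whole history into each prompt. A real product would need retrieval or summarization to stay within a context window, but the design choice that matters is the same: the workspace, not the individual call, is the unit that users return to.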

If you compete in AI productivity, the takeaway is more uncomfortable. The frontier is shifting from “who has the smartest answer box” to “who owns the most useful workflow container.” Google is not winning that fight everywhere, and its product line still has edges showing, but the direction is correct. Persistent environments beat clever one-offs. Multimodal outputs beat text monoculture. Grounded context beats empty fluency.

The finals-week framing is the marketing wrapper. The real story is that Google is trying to turn Gemini into operating infrastructure for knowledge work. That is a much bigger ambition than helping students cram, and it is one that other AI vendors should already be taking personally.

Sources: Google Blog, "6 easy ways to study for finals with Gemini"; Google Blog, "Try notebooks in Gemini to easily keep track of projects"; Google Blog, "The Gemini app can now generate interactive simulations and models"; Google Blog, "Guided Learning in Gemini: From answers to understanding"